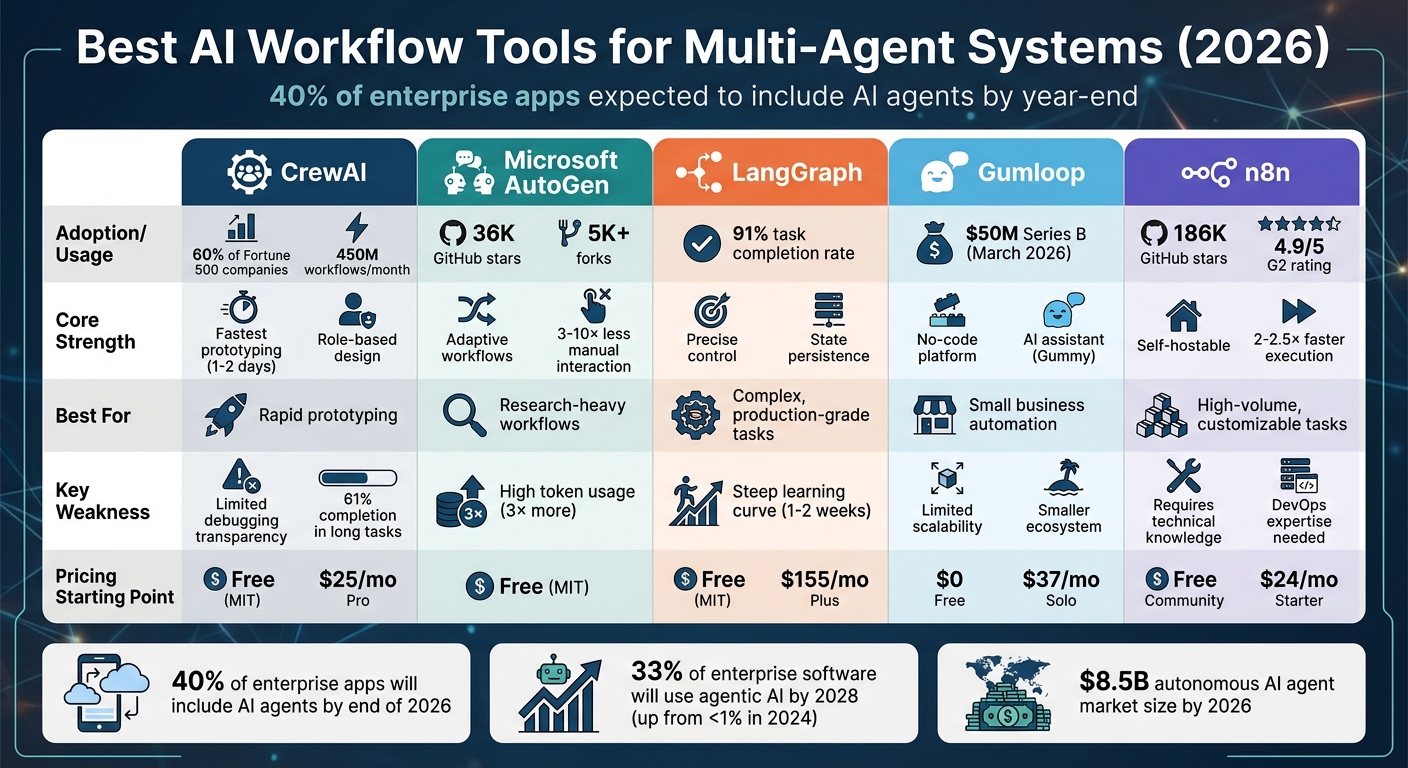

Best AI Workflow Tools for Multi-Agent Systems

Compare five top AI workflow platforms for multi-agent orchestration—tradeoffs in scalability, state persistence, cost, and prototyping speed.

Managing multiple AI agents is complex, but specialized tools make it easier. In 2026, multi-agent systems are becoming essential, with 40% of enterprise apps expected to include AI agents by year-end. The real challenge? Ensuring agents work together without errors. Below are five tools designed to streamline multi-agent workflows:

- CrewAI: Role-based design for quick prototyping. Used by 60% of Fortune 500 companies.

- Microsoft AutoGen: Message-driven framework, now transitioning to Microsoft Agent Framework for stability.

- LangGraph: Graph-based workflows offering precise control for stateful, long-running tasks.

- Gumloop: No-code platform for non-technical users, ideal for simpler workflows.

- n8n: Flexible, self-hostable solution with execution-based pricing, great for high-volume tasks.

Quick Comparison

| Tool | Strengths | Weaknesses | Best For |
|---|---|---|---|
| CrewAI | Fast setup, role-based design | Limited transparency in debugging | Rapid prototyping |
| AutoGen | Dynamic, modular workflows | High token usage | Research-heavy workflows |
| LangGraph | Precise control, state persistence | Steep learning curve | Complex, production-grade tasks |
| Gumloop | User-friendly, no-code interface | Limited scalability | Small business automation |
| n8n | Self-hostable, cost-efficient | Requires technical knowledge | High-volume, customizable tasks |

Choosing the right tool depends on your needs. For quick prototypes, go with CrewAI. For detailed, stateful workflows, LangGraph is ideal. Non-technical teams may prefer Gumloop, while n8n suits those needing a scalable, flexible solution.

AI Workflow Tools Comparison: Features, Strengths, and Best Use Cases


1. CrewAI

CrewAI operates on a role-based model, assigning each agent a specific role, goal, and backstory. These agents collaborate using structured processes - whether sequential, hierarchical, or consensual. This setup simplifies adoption, allowing developers to become proficient in just 1–2 days.
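To make the role-based model concrete, here is a minimal plain-Python sketch of agents with a role, goal, and backstory running a sequential process. It does not use CrewAI's API: `RoleAgent`, `run_sequential`, and the stubbed `act` method are hypothetical names invented for illustration, and a real agent would call an LLM where the stub formats a string.

```python
from dataclasses import dataclass


@dataclass
class RoleAgent:
    """Hypothetical stand-in for a role-based agent (not CrewAI's own class)."""
    role: str
    goal: str
    backstory: str

    def act(self, task: str, context: str) -> str:
        # A real agent would call an LLM here; this stub records who did what.
        return f"[{self.role}] {task} (context: {context or 'none'})"


def run_sequential(agents_and_tasks):
    """Run tasks in order, passing each output to the next agent as context."""
    context = ""
    outputs = []
    for agent, task in agents_and_tasks:
        context = agent.act(task, context)
        outputs.append(context)
    return outputs


researcher = RoleAgent("Researcher", "Find sources", "Former analyst")
writer = RoleAgent("Writer", "Draft the summary", "Technical editor")
results = run_sequential([(researcher, "collect facts"), (writer, "write summary")])
```

A hierarchical process would differ mainly in who chooses the next task: a manager agent would pick the assignee instead of the fixed list order used here.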

The platform organizes actions into two components: "Crews" for autonomous execution and "Flows" for managing states. This structure enables the creation of production-ready applications while maintaining agent autonomy. For handling large datasets, CrewAI automatically manages context windows by summarizing conversation history when token limits are reached. It also integrates RAG (retrieval-augmented generation) tools to efficiently query extensive document stores. This design ensures smooth multi-agent collaboration, as highlighted in the following features.

Multi-Agent Support

CrewAI includes a "Manager" agent that oversees interactions and delegates tasks. It also provides built-in memory systems - short-term, long-term, and entity memory - allowing agents to maintain context across complex workflows. Additionally, task guardrails ensure outputs meet specific requirements, delivering consistent and repeatable results, which is a must for production environments.

As of April 2026, CrewAI handles over 450 million agentic workflows monthly and is utilized by 60% of Fortune 500 companies. For instance, General Assembly used CrewAI to streamline curriculum design, cutting development time for a critical phase by 90%.

"We achieved a 90% reduction in development time for a critical phase of our process with CrewAI, motivating us to build agentic workflows for additional use cases"

- Chris Giordano, Director of Learning and Program Development, General Assembly.

Scalability

CrewAI's role-based model scales effortlessly through its AMP (Agent Management Platform) framework. AMP enables automatic serverless scaling, whether deployed in the cloud or on-premises. It supports both sequential and parallel task execution, allowing multiple agents to work simultaneously. To prevent exceeding API rate limits, agents can be configured with a maximum requests-per-minute (max_rpm) setting.
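The idea behind a requests-per-minute cap can be sketched with a sliding-window limiter. This is an illustration of the general technique only, not CrewAI's internal implementation of max_rpm; the `RpmLimiter` class and its injectable `clock` parameter are assumptions made so the example is easy to test.

```python
import time
from collections import deque


class RpmLimiter:
    """Sliding-window limiter: allow at most max_rpm calls per 60 seconds."""

    def __init__(self, max_rpm, clock=time.monotonic):
        self.max_rpm = max_rpm
        self.clock = clock      # injectable so tests can control time
        self.calls = deque()    # timestamps of calls inside the window

    def try_acquire(self) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the 60-second window.
        while self.calls and now - self.calls[0] >= 60:
            self.calls.popleft()
        if len(self.calls) < self.max_rpm:
            self.calls.append(now)
            return True
        return False
```

An agent loop would call `try_acquire()` before each LLM request and wait briefly whenever it returns `False`.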

For example, DocuSign reduced lead time-to-first-contact by 75% using CrewAI to automate lead data extraction and evaluation. PwC also saw significant improvements, increasing code-generation accuracy from 10% to 70% and reducing project turnaround times. The platform continues to grow, attracting over 4,000 new sign-ups weekly and supporting a community of more than 100,000 certified developers.

LLM Compatibility

CrewAI supports a wide range of large language model (LLM) providers, including OpenAI, Anthropic (Claude), Azure OpenAI, Google (Gemini), and local models via Ollama and LM Studio. It uses LiteLLM by default to connect to these providers but allows for custom configurations. The system is designed to minimize token usage and API calls, keeping operational costs low during multi-agent interactions.

Pricing Tiers

CrewAI offers an open-source version under the MIT License. The Free Tier includes 50 executions per month and one live deployed crew. The Professional plan costs $25 per month for 100 executions, with additional executions priced at $0.50 each. Higher-tier plans start at $49/month or $99/month, depending on the features included. Enterprise pricing is customized and offers advanced options like SOC2 compliance, SSO, RBAC, and VPC deployment. This straightforward pricing model helps organizations scale costs predictably as usage grows.
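As a rough budgeting aid, the Professional plan figures quoted above can be turned into a small calculator. This sketch assumes the bill is simply the base price plus overage on executions beyond the included 100; the function and constant names are hypothetical, and actual billing rules may differ.

```python
BASE_PRICE = 25.00     # Professional plan, USD per month
INCLUDED_RUNS = 100    # executions included in the base price
OVERAGE_PRICE = 0.50   # USD per additional execution


def professional_monthly_cost(executions: int) -> float:
    """Estimate the monthly bill for a given number of executions."""
    extra = max(0, executions - INCLUDED_RUNS)
    return BASE_PRICE + extra * OVERAGE_PRICE


cost_at_quota = professional_monthly_cost(100)
cost_at_250 = professional_monthly_cost(250)
```

Under these assumptions, 250 executions cost four times the base price, which is worth knowing before moving a high-volume workflow onto this tier.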

Primary Strengths and Weaknesses

CrewAI's biggest strength is its ability to support rapid prototyping through its intuitive role-based design. The YAML/Python hybrid configuration makes it easy for non-technical managers to define agent roles, while real-time tracing provides visibility into every step of the process - from task interpretation to tool usage. In specific QA tasks, CrewAI has shown execution speeds up to 5.76x faster than LangGraph.

However, the platform's "black box" nature can be a drawback. Unlike graph-based frameworks, it can be difficult to determine why an agent fails in certain scenarios. This lack of transparency can complicate debugging, especially for intricate edge cases. While the high-level abstraction speeds up development, it may limit the deterministic control developers sometimes need.

2. Microsoft AutoGen

Microsoft AutoGen introduces a dynamic approach to multi-agent systems by using a conversational agent design. Instead of sticking to fixed roles, agents interact through message exchanges to tackle tasks collectively. The framework includes pre-configured agents like AssistantAgent for language-related tasks and UserProxyAgent for code execution and monitoring [27,29]. This innovative messaging-based structure has significantly reduced manual interactions - by 3× to 10× - and cut coding efforts by over four times.

The system's adaptability is highlighted by its various orchestration patterns. For instance, RoundRobinGroupChat rotates agents in a set order, while SelectorGroupChat relies on a large language model (LLM) to decide the next speaker based on the situation. The Swarm pattern delegates tasks to specialized agents, and Magentic-One uses an "Orchestrator" agent to maintain a task ledger and oversee agents like WebSurfer and Coder [30,31]. Doug Burger, a Technical Fellow at Microsoft, remarked:

"Capabilities like AutoGen are poised to fundamentally transform and extend what large language models are capable of. This is one of the most exciting developments I have seen in AI recently."

This message-driven framework is the backbone of effective multi-agent collaboration.
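The difference between a fixed rotation and model-driven speaker selection can be shown in a few lines of plain Python. This sketch does not use AutoGen's classes: `round_robin_order`, `selector_order`, and `keyword_chooser` are hypothetical helpers, and the keyword rule stands in for the LLM call a selector-style pattern would make.

```python
from itertools import cycle


def round_robin_order(agents, turns):
    """Return the speaker for each turn, rotating through agents in a fixed order."""
    rotation = cycle(agents)
    return [next(rotation) for _ in range(turns)]


def selector_order(agents, messages, choose_speaker):
    """Pick one speaker per message using a caller-supplied selection function."""
    return [choose_speaker(agents, message) for message in messages]


def keyword_chooser(agents, message):
    # Stand-in for the LLM decision: route code questions to the coder.
    return "coder" if "code" in message and "coder" in agents else agents[0]


agents = ["planner", "coder", "reviewer"]
fixed = round_robin_order(agents, 5)
dynamic = selector_order(agents, ["plan the work", "write the code"], keyword_chooser)
```

The rotation is predictable and cheap, while the selector spends an extra decision per turn in exchange for choosing the most relevant agent.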

Multi-Agent Support

AutoGen stands apart from role-based systems with its message-driven model, which supports both centralized and distributed orchestration. The framework has gained considerable traction, boasting 36,000 stars on GitHub, over 5,000 forks, 400+ contributors, and millions of Python package downloads. Version 0.4 marked a major shift by introducing an asynchronous, event-driven architecture, enabling agents to work across multiple processes, machines, and even organizations [28,32]. This update replaced direct function calls with structured message passing, paving the way for high-throughput workflows in enterprise settings.

AutoGen also includes built-in observability through OpenTelemetry integration, offering distributed tracing and logging for complex agent interactions [28,32]. Cross-language compatibility allows Python and .NET agents to collaborate seamlessly, so teams can work in their preferred programming languages while maintaining a unified system [32,33]. For workflows that run over extended periods, AutoGen can save and restore task progress, ensuring no context is lost during interruptions [31,33].

Scalability

Thanks to its asynchronous design, AutoGen can scale horizontally across different infrastructures. For enterprise use, it integrates with Azure AI Foundry, providing managed hosting, autoscaling, and load balancing. Its pay-as-you-go model includes Microsoft Entra ID authentication and content safety features for production environments. However, in April 2026, Microsoft announced that AutoGen would enter maintenance mode. They now recommend the Microsoft Agent Framework (MAF) as a more stable successor with long-term support, standardized APIs, and interoperability through A2A and MCP protocols. While AutoGen remains a strong choice for research and prototyping, MAF is better suited for production environments requiring guaranteed stability.

LLM Compatibility

AutoGen is model-agnostic, supporting platforms such as OpenAI (GPT-4o, o1‑preview), Azure OpenAI, Anthropic Claude, Google Gemini, Mistral, and local models through Ollama. It also supports the Model Context Protocol, which ensures consistent tool interoperability [26,32,36]. This versatility allows developers to combine models based on their needs - using advanced reasoning models for orchestration tasks and lighter models for routine operations.

Pricing Tiers

AutoGen is free to use under the MIT License. Operational costs depend on the LLM API provider, whether OpenAI, Azure OpenAI, or another service. Azure AI Foundry offers managed hosting with enterprise-grade features on a pay-as-you-go basis, with pricing determined by resource usage and selected service tiers.

Primary Strengths and Weaknesses

AutoGen's standout feature is its flexibility and modular design. Its layered structure - comprising the Core (event-driven framework), AgentChat (conversational API), and Extensions (pluggable components) - enables developers to create anything from simple assistants to complex distributed systems [26,28]. Features like Docker-based sandboxed code execution enhance security, while built-in human-in-the-loop support ensures critical oversight [28,37]. These capabilities earned AutoGen the "Best Multi-Agent Framework" award from AI Tools Atlas in January 2026.

However, there are challenges. Many users report a steep learning curve when dealing with complex orchestration. The transition from version 0.2 to 0.4 introduced breaking changes, causing difficulties for ongoing projects [28,32]. Additionally, AutoGen Studio, the no-code interface, is still in preview, which limits its accessibility for non-technical users.

3. LangGraph

LangGraph organizes workflows as directed graphs that can contain cycles. Each node is a Python function (an agent or a tool, for example), and edges define the transitions between nodes. Unlike traditional linear workflows, this graph-based structure supports loops and non-linear logic, which matters when agents need to retry actions, correct errors, or pause for human input. At its core, LangGraph uses a "StateGraph" parameterized by a state schema (such as a TypedDict or a Pydantic model) and includes built-in checkpointers like PostgresSaver to persist state at every "super-step". This lets workflows recover from process restarts and enables "time-travel debugging", in which developers revisit and re-execute previous nodes.
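The mechanics described here (nodes as functions, edges that can loop back, and a checkpoint after every step) can be sketched without any library. The code below is not LangGraph's API: `run_graph`, the `draft` and `review` nodes, and the in-memory checkpoint list are hypothetical, with the list standing in for a durable store such as Postgres. The `max_steps` guard is an assumption added to show one way of bounding a cycle.

```python
import copy


def run_graph(nodes, edges, start, state, max_steps=10):
    """Run nodes until an edge returns None, saving a checkpoint after each step."""
    checkpoints = []
    current = start
    for _ in range(max_steps):
        state = nodes[current](state)
        checkpoints.append((current, copy.deepcopy(state)))
        current = edges[current](state)
        if current is None:
            return state, checkpoints
    raise RuntimeError("step limit reached; possible infinite loop")


def draft(state):
    return {**state, "attempts": state["attempts"] + 1}


def review(state):
    return {**state, "approved": state["attempts"] >= 2}


nodes = {"draft": draft, "review": review}
edges = {
    "draft": lambda s: "review",
    # Cycle back to drafting until the review approves.
    "review": lambda s: None if s["approved"] else "draft",
}
final_state, checkpoints = run_graph(
    nodes, edges, "draft", {"attempts": 0, "approved": False}
)
```

Because every step leaves a copy of the state behind, re-running from an earlier checkpoint only requires calling `run_graph` again with that saved state and node name.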

"LangGraph sets the foundation for how we can build and scale AI workloads - from conversational agents, complex task automation, to custom LLM-backed experiences that 'just work'." - Garrett Spong, Principal Software Engineer

A standout feature is the interrupt() primitive, which lets agents pause execution until human approval is received. This makes LangGraph particularly useful in industries with strict compliance requirements. Its immutable checkpoint logs meet EU AI Act Article 14 auditing standards, making it a reliable choice for high-risk applications. This graph-based design also lays the groundwork for advanced multi-agent capabilities.

Multi-Agent Support

LangGraph's architecture is ideal for managing complex multi-agent systems, offering several powerful features. Its parallel execution capability enables "fan-out/fan-in" patterns, where multiple sub-agents can run simultaneously. This approach often cuts latency by 50-70% in tasks like research and analysis. For larger systems, subgraph composition allows workflows to be broken into smaller, testable subgraphs that communicate via defined state interfaces, much like microservices.
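A fan-out/fan-in step can be illustrated with the standard library's asyncio. This is a generic concurrency sketch rather than LangGraph code: `sub_agent` and `fan_out_fan_in` are hypothetical names, and the short sleep stands in for an LLM or tool call.

```python
import asyncio


async def sub_agent(topic, delay):
    # Stand-in for a sub-agent's LLM or tool call.
    await asyncio.sleep(delay)
    return f"notes on {topic}"


async def fan_out_fan_in(topics):
    # Fan out: start every sub-agent concurrently.
    results = await asyncio.gather(*(sub_agent(t, 0.01) for t in topics))
    # Fan in: merge results, which gather returns in the original topic order.
    return dict(zip(topics, results))


merged = asyncio.run(fan_out_fan_in(["pricing", "scaling", "security"]))
```

The latency benefit comes from the waits overlapping: total time approaches that of the slowest sub-agent instead of the sum of all of them.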

The framework's efficiency is evident in benchmarks, achieving a 91% task completion rate in sequential tool-use scenarios involving five or more consecutive tool calls. LangGraph also supports an open Agent Protocol, enabling seamless communication between agents across frameworks like CrewAI and Microsoft Agent Framework. This interoperability is especially useful for enterprises managing diverse AI systems.

Scalability

LangGraph is built to scale horizontally by using stateless workers and a persistent state backend, such as PostgreSQL, Redis, or DynamoDB. Its "Durable Agent Execution" feature allows agents to pause for extended periods (even days) while waiting for human input, and it can survive server restarts. For large-scale use, LangGraph employs a hybrid architecture that combines a Cloud Control Plane with a VPC Data Plane.

However, scalability isn't without its challenges. Developers must manually implement parallel execution logic, as it isn't automated. Long-running workflows can also lead to unbounded state growth, which slows down serialization. For instance, a 10-turn conversation with 5 nodes per turn generates 50 checkpoints, necessitating strict retention policies to control storage costs. Additionally, while LangGraph is reliable, its instantiation can be up to 529x slower than some high-performance alternatives, though it remains a go-to for stateful workflows.
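The checkpoint arithmetic above, and the effect of a retention policy, can be checked with a short sketch. The helper names are hypothetical, and the keep-the-most-recent policy is only one assumed option; a real deployment might instead expire checkpoints by age.

```python
def checkpoint_count(turns: int, nodes_per_turn: int) -> int:
    """One checkpoint is written per node execution."""
    return turns * nodes_per_turn


def apply_retention(checkpoints, keep_last: int):
    """Keep only the most recent keep_last checkpoints."""
    return checkpoints[-keep_last:] if keep_last > 0 else []


total = checkpoint_count(turns=10, nodes_per_turn=5)
retained = apply_retention(list(range(total)), keep_last=10)
```

Here `total` is 50, and keeping the last 10 checkpoints cuts stored state by 80% at the cost of a shorter time-travel history.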

LLM Compatibility

LangGraph supports a wide range of models, including OpenAI (GPT-4o, GPT-5), Anthropic, Azure OpenAI, and local models via Ollama. This flexibility allows developers to orchestrate multiple models within a single workflow, optimizing for specific tasks. For example, a complex reasoning task might use GPT-4o, while simpler validation steps could rely on Llama 3.2 3B to save on costs and reduce latency. Importantly, developers can swap LLM providers without altering the underlying workflow logic, offering the freedom to balance performance, cost, and speed.

Pricing Tiers

The LangGraph core library is free under an MIT license. For managed deployments, LangGraph Cloud offers three pricing tiers as of 2026:

- Developer tier: Free, with 100,000 nodes per month.

- Plus tier: Starts at $155/month, includes standby availability, and charges $0.001 per node execution.

- Enterprise tier: Custom pricing with SLA guarantees.

Additionally, observability through LangSmith costs $0.50 per 1,000 traces after the free tier of 10,000 traces/month is exceeded.

Primary Strengths and Weaknesses

LangGraph shines with its explicit state control and precise orchestration, making it a reliable choice for mission-critical workflows like insurance claims or legal document processing. Its integration with LangSmith provides real-time monitoring of agent decisions, token usage, and latency, enhancing its utility for enterprise applications.

"LangGraph has been instrumental for our AI development. Its robust framework for building stateful, multi-actor applications with LLMs has transformed how we evaluate and optimize the performance of our AI guest-facing solutions." - Andres Torres, Senior Solutions Architect

That said, the framework has a steep learning curve, requiring developers to understand graph theory. Mastery typically takes 1-2 weeks. Another limitation is that LangGraph doesn't inherently prevent infinite loops, so developers must design safeguards like iteration limits or timeout nodes. Using a durable checkpoint store, such as Redis or Postgres, is also essential in production; otherwise, process crashes can result in lost state.

4. Gumloop

Gumloop provides a no-code platform designed for multi-agent workflows, making it accessible to non-technical users like marketers, freelancers, and small business teams. Through its visual drag-and-drop interface, users can create AI-powered processes without writing any code. Its standout feature, the AI assistant "Gummie", lets users describe tasks in plain English, which the platform then translates into workflow nodes and logic automatically. This makes it a user-friendly alternative to code-heavy platforms while introducing a distinctive multi-agent delegation system.

"Understanding a task should be the only prerequisite to automating it." - Gumloop

Multi-Agent Support

Gumloop's approach to multi-agent systems revolves around a delegation model. A parent agent acts as a central coordinator: it uses the invoke_agent tool to create subagents for specific tasks and assigns each task to the subagent with the relevant expertise. Subagents can operate in parallel, with cloning depth limited to one level. To keep runs bounded, subagents automatically time out after 15 minutes. Parent agents can also transfer files to subagents and review their completed work, including conversation transcripts.
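The two limits on delegation, one level of depth and a 15-minute timeout, can be expressed as simple guards. This sketch is not Gumloop's implementation: the `Agent` class, `spawn`, and `timed_out` are hypothetical, and it assumes the depth limit means a subagent cannot create subagents of its own.

```python
from dataclasses import dataclass, field

MAX_DEPTH = 1               # assumed meaning: subagents cannot delegate further
TIMEOUT_SECONDS = 15 * 60   # 15-minute subagent timeout


@dataclass
class Agent:
    name: str
    depth: int = 0
    children: list = field(default_factory=list)

    def spawn(self, name):
        """Create a subagent, refusing if the depth limit is already reached."""
        if self.depth >= MAX_DEPTH:
            raise PermissionError(f"{self.name} cannot delegate further")
        child = Agent(name, depth=self.depth + 1)
        self.children.append(child)
        return child


def timed_out(started_at: float, now: float) -> bool:
    """Return True once a subagent has run for the full timeout."""
    return now - started_at >= TIMEOUT_SECONDS


parent = Agent("parent")
researcher = parent.spawn("researcher")
```

Keeping the tree this shallow makes cost and runtime easy to bound, since the worst case is the number of subagents multiplied by the timeout.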

For batch operations, a shared progress board monitors the status of subagents, while sibling agents can communicate through broadcast notes. Additionally, agents have the ability to improve their prompts based on user feedback and reference past interactions for context. Gumloop's Code Sandbox, which includes over 80 pre-installed Python packages, ensures state preservation across tool calls. This delegation and cloning system enhances efficiency and complements the platform's no-code approach to workflow design.

Scalability

Gumloop's architecture is tailored for quick prototyping and simpler automation tasks. It handles linear workflows effectively but faces challenges with more intricate needs, such as advanced branching logic, reusable components, or the rigorous testing required for production-level systems. For enterprise users, the platform offers features like Virtual Private Cloud (VPC) deployments, role-based access control (RBAC), usage tracking, and a dedicated security layer called Gumstack. However, the platform's concurrent execution limits - 2 for Free, 4 for Solo, and 5 for Team plans - can constrain its use for more demanding applications.

LLM Compatibility

Gumloop integrates with leading large language model (LLM) providers, allowing users to connect their own API keys and choose from various AI models based on their subscription tier. Enterprise plans include additional controls to manage AI model usage and governance.

Pricing Tiers

As of 2026, Gumloop offers four subscription plans:

- Free/Starter: $0/month, includes 2,000 credits, 1 seat, 1 active trigger, and 2 concurrent runs.

- Solo: $37/month, offers 10,000+ credits, unlimited triggers, 4 concurrent runs, and API key support.

- Team: $244/month, includes 60,000+ credits, 10 seats, 5 concurrent runs, unified billing, and Slack integration.

- Enterprise: Custom pricing, featuring RBAC, audit logs, VPC deployment, SCIM/SAML integration, and advanced AI model controls.

Running an agent in a workflow costs 3 credits per run, with additional fees for model and tool executions. Companies can reduce token costs by up to 40% through optimized orchestration.

Primary Strengths and Weaknesses

Gumloop's intuitive interface and Gummie assistant make it easy for users to automate simple tasks, such as PDF parsing, without technical expertise. However, its limited capacity for handling complex workflows and its smaller library of templates and tutorials can make troubleshooting more advanced processes difficult. These limitations may prompt users to consider alternative solutions as their needs grow.

"Gumloop isn't just about connecting apps; it's about embedding AI into every step." - Vellum AI Blog

In March 2026, Gumloop secured $50 million in Series B funding, led by Benchmark, to expand its AI automation framework. This investment highlights the growing confidence in no-code AI solutions.

5. n8n

n8n stands out among AI workflow orchestration tools for its flexible and scalable design. It allows users to automate multi-agent workflows through an intuitive visual interface, while also supporting custom JavaScript or Python code. With over 186,000 stars on GitHub and a 4.9/5 rating on G2, it’s a favorite among developers. n8n connects to virtually any API using its HTTP Request node and offers more than 1,000 native integrations.

"n8n was the big unlock. Tools like ChatGPT and Claude are great, but n8n is the thing that allows you to integrate AI into your work and your processes in a safe and controlled way." - Ollie Scheers, Chief Technology Officer, Huel

Multi-Agent Support

n8n enables multi-agent workflows using features like the "Routing by Branch" classifier and the "Orchestrator Pattern", which assigns tasks through the AI Agent Tool Node. Its modular approach aligns with the Single Responsibility Principle, ensuring each agent focuses on specific tasks, such as ERP access or quality assurance.

The AI Agent Tool Node also supports hierarchical systems, where a "supervisor" agent manages specialized sub-agents. This setup combines deterministic nodes for enforcing strict rules with agentic nodes for tasks requiring natural language reasoning. Acting as both a Model Context Protocol (MCP) client and server, n8n standardizes interactions between agents and external tools.

For debugging, n8n offers visual execution logs, trace IDs, and metadata capture. Studies indicate multi-agent systems can outperform single agents by 90.2%, though they may consume up to 15 times more tokens. To manage costs, teams can assign complex reasoning tasks to expensive models while using efficient models for simpler operations. These techniques make n8n a strong choice for scaling high-volume workflows.

Scalability

n8n’s Queue Mode, powered by Redis, separates scheduling from execution, enabling horizontal scaling with multiple worker processes or Kubernetes. This ensures workflows run concurrently without interference. Users can monitor queue depth and worker performance to adjust resources dynamically.

Vodafone’s 2025 implementation of n8n transformed its threat intelligence and cyber operations, saving £2.2 million by blending low-code workflows with custom integrations. Similarly, Huel used n8n to automate tasks across tools, saving 1,000 hours of manual work and fostering an "AI-first" culture.

"n8n provides SOAR capability and workflows in a low-code model, as well as the ability to code for more complex workflows and integrations. It did everything that we wanted, all in one tool." - Claire Van Hinsbergh, Cyber Operations Engineering Manager, Vodafone

Unlike platforms that charge per task, n8n uses a per-execution billing model, covering entire workflow runs. This can be more economical for complex systems. However, performance may drop when processing datasets larger than 100,000 rows in a single workflow.

LLM Compatibility

n8n integrates seamlessly with various large language model (LLM) providers, enhancing its workflow capabilities. It supports OpenAI, Anthropic, Google Gemini, Mistral, DeepSeek, and local models via Ollama, allowing users to pay LLM providers directly.

Pricing Tiers

n8n offers four deployment options:

| Plan | Cost | Executions | Features |
|---|---|---|---|
| Community | Free | Unlimited | Self-hosted, full source code access |
| Starter | $24/month | 2,500 | Cloud hosting, basic support |
| Pro | $60/month | 10,000 | Advanced features, priority support |
| Enterprise | Custom | Custom | SSO, audit logs, RBAC, dedicated support |

The Community Edition is particularly appealing for businesses requiring strict compliance, like HIPAA or GDPR, as it offers full source code access and unlimited executions.

Primary Strengths and Weaknesses

n8n’s self-hosting option ensures data stays secure, keeping sensitive information and API keys within a company’s private cloud. Its combination of a visual interface and code-level control makes it ideal for teams balancing rapid prototyping with customization.

However, n8n demands more technical expertise than other no-code platforms, requiring users to understand JSON, HTTP APIs, and basic coding. Occasional non-descriptive error messages can also pose challenges. While some competitors offer a broader range of integrations, n8n’s HTTP Request and Code nodes provide a flexible, cost-efficient way to connect to any API, making it a strong contender for handling high-volume workflows.

Pros and Cons

Choosing the right AI workflow tool means weighing its strengths against its limitations, especially when it comes to multi-agent orchestration. Here's a closer look at how some of the leading tools stack up:

LangGraph stands out for its graph-based, stateful design, which offers unmatched control over workflows. It boasts an impressive 91% task completion rate in sequential tool-use benchmarks, the highest among major frameworks. However, debugging can be time-intensive, with a median time of 47 minutes.

CrewAI, on the other hand, shines in rapid prototyping thanks to its role-based design, making it intuitive and quick to set up. That said, its performance tends to dip in long-horizon tasks, with completion rates dropping from 84% to 61% after 12 steps due to challenges with context accumulation.

Microsoft AutoGen (now in maintenance mode, with the Microsoft Agent Framework as its recommended successor) is well-suited for adaptive, research-heavy workflows. It maintains an 88% completion rate for long-horizon tasks. But its conversational approach comes at a cost: it can consume three times more tokens than single-pass workflows, which can lead to higher API expenses.

Gumloop takes a different approach with its AI assistant, "Gummie", enabling visual workflow creation. While it simplifies the process for users, its newer ecosystem means fewer integrations and a smaller community compared to more established platforms.

n8n is a favorite among technical teams that value infrastructure flexibility. Its execution-based pricing model is particularly cost-effective for complex workflows, as a 200-step process counts as just one execution, avoiding the high costs of task-based alternatives. Benchmarks show that n8n runs workflows 2–2.5× faster due to reduced orchestration overhead. The trade-off? A steeper learning curve requiring familiarity with JSON, HTTP APIs, and coding, as well as DevOps expertise for self-hosting.
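The gap between the two billing models is easiest to see by counting billable units. The sketch below compares unit counts only; `billable_units` is a hypothetical helper, and no per-unit prices are assumed because they vary by vendor and plan.

```python
def billable_units(runs: int, steps_per_run: int, model: str) -> int:
    """Count billable units for a workflow under a given billing model."""
    if model == "per_execution":
        return runs                  # one unit per full workflow run
    if model == "per_task":
        return runs * steps_per_run  # one unit per step inside every run
    raise ValueError(f"unknown billing model: {model}")


execution_units = billable_units(1000, 200, "per_execution")
task_units = billable_units(1000, 200, "per_task")
```

For 1,000 runs of a 200-step workflow, that is 1,000 billable units against 200,000, which is why long, loop-heavy workflows favor execution-based pricing.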

"LangGraph gives you the most control over complex branching workflows. CrewAI gets you to a working multi-agent prototype fastest." - Kai Renner, Senior AI/ML Engineering Leader

Here's a quick comparison of the tools' core features and drawbacks:

| Tool | Multi-Agent Support | Scalability | LLM Compatibility | Core Strength | Core Weakness |
|---|---|---|---|---|---|
| LangGraph | Excellent (Graph-based) | Excellent (State persistence) | Universal | Precise control over cycles and loops | Steep learning curve; high debugging time |
| CrewAI | High (Role-based teams) | Moderate (Sequential bottlenecks) | Universal | Fastest prototyping; intuitive roles | Performance drops in long tasks; buggy parallel mode |
| Microsoft AutoGen | High (Conversational) | Moderate (High token cost) | Universal (Azure-optimized) | Adaptive workflows; human-in-the-loop | Difficult to enforce determinism; verbose setup |
| n8n | Moderate (Node-based) | High (Execution-based pricing) | Universal (Any model/local) | Self-hostable; cost-effective for loops | Requires DevOps for self-hosting |
| Gumloop | Moderate (Visual subflows) | Moderate (Credit-based) | Major LLMs | AI-assisted building; no-code | Newer ecosystem; marketing-biased templates |

The best choice depends on your team's skills and the complexity of the tasks at hand. LangGraph is ideal for production-grade systems requiring precise control, while CrewAI is perfect for quickly creating prototypes. If your workflows are dynamic and research-intensive, AutoGen is a strong contender - just be mindful of token usage. For cost-effective, high-volume workflows, n8n is a solid option, especially for those with the technical know-how to manage self-hosting. Lastly, Gumloop offers an approachable solution for teams without coding expertise, particularly in marketing and operations.

Conclusion

Choosing the right tool depends on your specific needs and priorities, especially when balancing complexity, speed, and cost.

If you're working on production-grade systems that demand precise control over intricate decision trees and state management, LangGraph stands out as the go-to option. It's trusted by major companies like Elastic and Replit, though it requires a ramp-up period of 2–3 weeks.

For those prioritizing rapid prototyping or looking for budget-friendly options, CrewAI delivers prototypes in about one week. Meanwhile, n8n provides a cost-efficient, long-term solution with its execution-based pricing model and self-hosting capabilities.

If your organization is heavily invested in Azure, the Microsoft Agent Framework is a strong contender. It already supports over 230,000 organizations through its integration with the Copilot Studio ecosystem, with a planned 1.0 GA release in Q1 2026.

Looking ahead, the potential for agentic AI is enormous. Gartner forecasts that by 2028, 33% of enterprise software applications will incorporate agentic AI, a significant leap from less than 1% in 2024. With 60% of Fortune 500 companies already adopting multi-agent orchestration as of early 2026, starting small with single-agent workflows can help prove value before scaling. Be prepared to allocate three times your estimated LLM API costs in the first quarter of deployment. The autonomous AI agent market is also expected to reach $8.5 billion by 2026.

The right choice will enable seamless multi-agent orchestration, giving your organization a competitive edge while driving efficiency.

FAQs

How do I decide between role-based, message-driven, and graph-based agent orchestration?

Choosing the right orchestration method - whether role-based, message-driven, or graph-based - depends on your system's specific needs for complexity, scalability, and control:

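- Role-based (for example, CrewAI): Works well when the work divides into clearly defined roles and you want a working prototype quickly. The trade-off is less visibility into why an agent failed.

- Message-driven (for example, AutoGen): Fits adaptive, research-heavy workflows where agents decide the next step through conversation. The trade-off is higher token usage and less determinism.

- Graph-based (for example, LangGraph): Designed for workflows with loops, branching, and persistent state that must survive restarts. The trade-off is a steeper learning curve.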

Each method serves different architectural goals, so it's important to align your choice with the unique demands of your system.

What’s the simplest way to reduce token usage and API costs in multi-agent workflows?

To save on token usage and API costs, consider implementing multi-model routing. This means assigning tasks to the most suitable model: use advanced models for planning or complex tasks, and opt for smaller, more efficient models for simpler sub-tasks. This approach can lower token costs by 30–60%.
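A minimal sketch of multi-model routing follows. The model tiers, per-token prices, task kinds, and token counts are all hypothetical values chosen for illustration, so the resulting saving only demonstrates the calculation and is not a benchmark.

```python
# Hypothetical prices in USD per 1,000 tokens.
MODEL_COST_PER_1K = {"large": 0.010, "small": 0.001}


def pick_model(task_kind: str) -> str:
    """Send planning and complex reasoning to the large model, the rest to the small one."""
    return "large" if task_kind in {"planning", "complex_reasoning"} else "small"


def estimate_cost(tasks, router) -> float:
    """Sum token cost over (task_kind, tokens) pairs using the router's model choice."""
    return sum(tokens / 1000 * MODEL_COST_PER_1K[router(kind)] for kind, tokens in tasks)


tasks = [("planning", 5000), ("validation", 3000), ("formatting", 2000)]
routed_cost = estimate_cost(tasks, pick_model)
all_large_cost = estimate_cost(tasks, lambda kind: "large")
saving = 1 - routed_cost / all_large_cost
```

With these made-up numbers the routed workflow costs about 45% less than sending everything to the large model; the real figure depends on the task mix and on actual provider pricing.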

Other strategies include simplifying prompts, narrowing the scope of agent responsibilities, and caching commonly used code patterns. You can also use adaptive supervision frameworks to reduce unnecessary API calls while still maintaining high success rates.

What do I need for reliable long-running agents (state, retries, and human approvals)?

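Reliable long-running agents need three things. First, durable state: persist checkpoints to a store such as Postgres or Redis so a workflow survives process restarts, and set a retention policy so checkpoint storage does not grow without bound. Second, bounded retries: retry failed steps, but cap them with iteration limits or timeouts so a failing agent cannot loop forever. Third, human approvals: use a pause-and-resume mechanism, such as LangGraph's interrupt() primitive, so execution waits for sign-off before continuing.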
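The retry part of that answer can be sketched as a bounded retry loop with exponential backoff. This is a generic pattern rather than any platform's built-in feature: `run_with_retries` and its parameters are hypothetical, and the `sleep` argument is injectable so the example can run without real delays.

```python
import time


def run_with_retries(step, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call step(); on failure wait base_delay * 2**attempt, then retry.

    Returns (result, delays_waited). Re-raises after the final attempt fails.
    """
    delays = []
    for attempt in range(max_attempts):
        try:
            return step(), delays
        except Exception:
            if attempt == max_attempts - 1:
                raise
            delay = base_delay * 2 ** attempt
            delays.append(delay)
            sleep(delay)


calls = {"n": 0}


def flaky_step():
    # Fails twice with a transient error, then succeeds.
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("transient failure")
    return "ok"


result, delays = run_with_retries(flaky_step, sleep=lambda seconds: None)
```

Capping attempts keeps a permanently failing step from burning tokens indefinitely, and a production version would usually retry only on errors known to be transient.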