Proactive AI Risk Detection with Stakeholder Input

Proactively detect AI risks using stakeholder input, continuous monitoring, and predictive analytics to prevent breaches and bias.

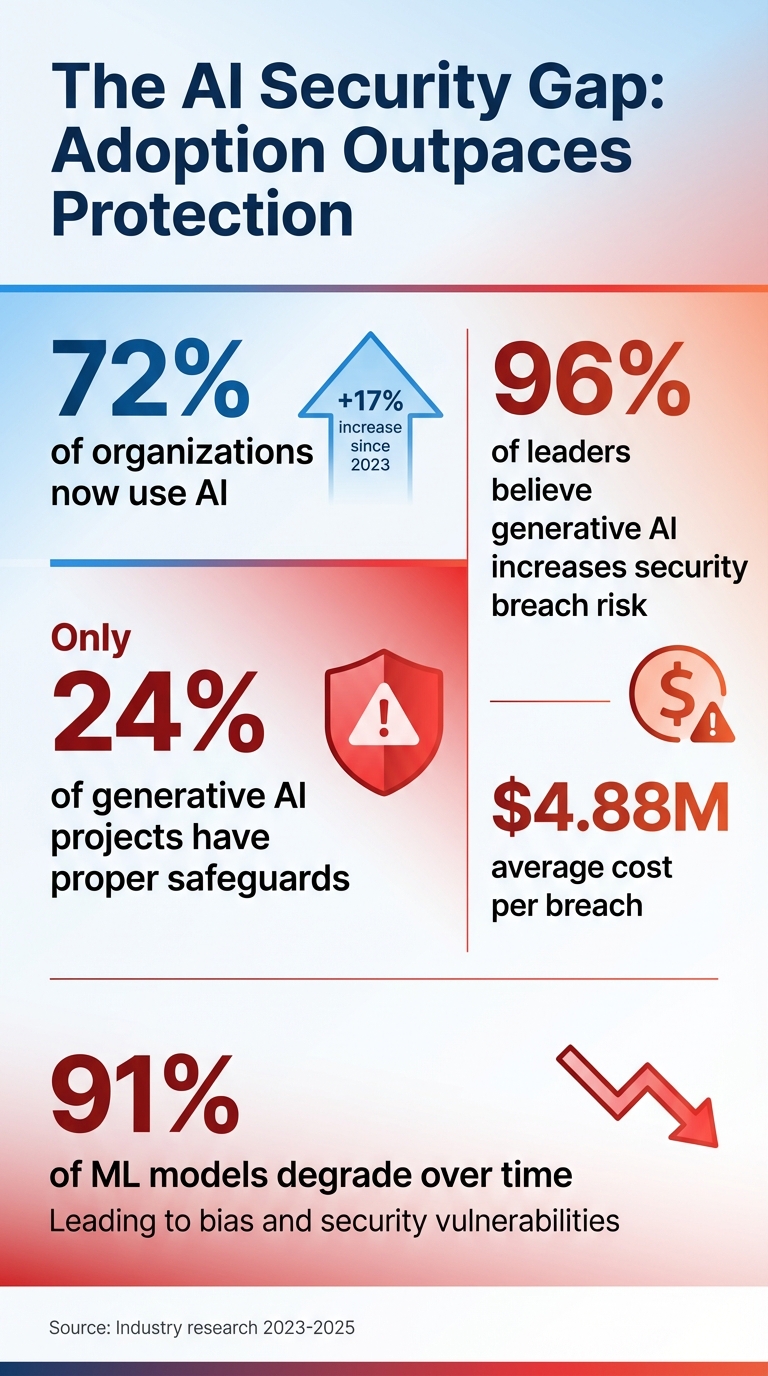

AI adoption is growing rapidly, but security measures are lagging behind. While 72% of organizations now use AI - a 17% increase since 2023 - only 24% of generative AI projects have proper safeguards in place. This gap is alarming, especially as 96% of leaders believe generative AI increases the risk of security breaches, with an average cost of $4.88 million per breach.

Key takeaways from the article:

- AI model drift is a major challenge: 91% of machine learning models degrade over time, leading to bias and security vulnerabilities.

- Stakeholder collaboration is critical for identifying risks early. Developers, users, and ethicists bring diverse perspectives to uncover potential issues.

- Steps to manage AI risks include:

  - Mapping stakeholders and AI systems.

  - Collecting feedback through surveys, interviews, and sentiment analysis.

  - Cataloging and prioritizing risks using tools like risk registers and heat maps.

  - Implementing tailored mitigation strategies with clear accountability.

  - Continuously monitoring systems through dashboards and automated tools.

Organizations that shift from reactive to early risk detection save millions and build trust by preventing issues before they escalate. By integrating stakeholder feedback into risk management, companies can ensure AI systems remain secure, reliable, and compliant throughout their lifecycle.

AI Adoption vs Security Gap: Key Statistics on AI Risk Management


Step 1: Map Your Stakeholders and AI Systems

Start by identifying all your AI systems and the stakeholders connected to them. Build a detailed inventory of every AI application in use, and pinpoint everyone who is impacted by these systems.

For each system, clearly document its purpose, possible misuse scenarios, operational limits, and dependencies. For example, if you’re working with a hiring algorithm, note that its goal is to assist in screening candidates, but it could be misused to unfairly filter applicants. Also, highlight its integration constraints and reliance on historical data. This level of detail helps you spot potential failure points and identify who needs to be involved to address these risks effectively. This mapping process lays the groundwork for gathering meaningful input from stakeholders in the next steps.
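The documentation fields described above can be captured in a small record type. The following is a minimal sketch, not a standard schema: the class name `AISystemRecord`, its field names, and the `missing_fields` helper are all illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """One inventory entry. Field names are illustrative, not a standard schema."""
    name: str
    purpose: str
    misuse_scenarios: list[str] = field(default_factory=list)
    operational_limits: list[str] = field(default_factory=list)
    dependencies: list[str] = field(default_factory=list)
    stakeholders: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return the names of documentation fields that are still empty."""
        checks = {
            "purpose": self.purpose.strip(),
            "misuse_scenarios": self.misuse_scenarios,
            "operational_limits": self.operational_limits,
            "dependencies": self.dependencies,
            "stakeholders": self.stakeholders,
        }
        return [name for name, value in checks.items() if not value]


# The hiring-algorithm example from the text, with stakeholders not yet mapped.
hiring_screener = AISystemRecord(
    name="hiring-screener",
    purpose="Assist recruiters in screening candidates",
    misuse_scenarios=["Unfairly filtering out applicants"],
    operational_limits=["Integration constraints with existing HR tooling"],
    dependencies=["Historical hiring data"],
)

print(hiring_screener.missing_fields())  # ['stakeholders']
```

Running a check like `missing_fields` across the whole inventory gives a quick list of systems whose documentation is incomplete before stakeholder outreach begins.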

Categorizing Stakeholders

Stakeholders generally fall into five groups: strategic governance, assurance and compliance, technical teams, business users, and external parties. Managing AI risks is a shared responsibility - no single group has a complete view of all potential issues. As Chris Fong aptly puts it:

"If stakeholder engagement is not recorded, it effectively didn't happen."

Why Stakeholder Mapping Matters

Including a variety of perspectives in your mapping process is essential to avoid what is often referred to as "accuracy monoculture." This happens when teams optimize for a single metric, such as precision or recall, while ignoring broader risks like fairness, privacy, or compliance. For instance, if only technical teams evaluate an AI system, they might miss critical issues that legal or ethics teams would catch.

AI systems often impact people who never directly interact with them. Think about job applicants screened out by an algorithm or community members affected by risk classifications. Failing to identify these external stakeholders can lead to blind spots that only become apparent once the system is in use. Consider this: while 77% of employees use generative AI at work, only 28% of organizations have clear usage policies. Additionally, data breaches involving "Shadow AI" cost an average of $670,000 more than regular breaches. These statistics highlight the risks of not mapping all affected parties.

Tools for Stakeholder Mapping

One effective tool for organizing roles and responsibilities is the RACI matrix, which stands for:

- Responsible: Executes the tasks.

- Accountable: Owns the outcome and makes final decisions.

- Consulted: Provides expert input that shapes decisions.

- Informed: Needs to stay updated but doesn’t influence decisions.

Here’s how you can apply RACI to AI risk management: assign only one Accountable person per AI system to prevent delays. For example, in a high-risk credit scoring model, the product owner might be Accountable, data scientists would be Responsible for building the model, legal and compliance teams would be Consulted for regulatory guidance, and executives would be Informed about deployment decisions. Limiting the number of Consulted roles is key to avoiding bottlenecks.

As a rough rollout timeline, use the first 15 days to define who is responsible for AI risks and establish a governance framework. Then, during days 16–35, inventory your AI systems and map your stakeholders.

| RACI Role | Definition | Typical AI Stakeholders |
|---|---|---|
| Responsible | Executes tasks or does the actual work | Developers, Engineers, Data Scientists |
| Accountable | Owns the outcome and makes final calls | Product Owners, AI Risk Owners |
| Consulted | Provides expert advice that shapes decisions | Legal, Compliance, Security Teams |
| Informed | Stays updated but doesn’t influence outcomes | Executives, Support Teams |
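The RACI rules above (exactly one Accountable per AI system, a limited number of Consulted roles) are easy to check automatically. This is a minimal sketch under stated assumptions: the system names, the role assignments, and the `MAX_CONSULTED` cap of 3 are hypothetical values chosen for illustration.

```python
from collections import Counter

# Hypothetical assignments: system -> {stakeholder: RACI letter}
raci = {
    "credit-scoring-model": {
        "Product Owner": "A",
        "Data Scientists": "R",
        "Legal": "C",
        "Compliance": "C",
        "Executives": "I",
    },
    "support-chatbot": {
        "Engineers": "R",
        "Security Team": "C",
    },
}

MAX_CONSULTED = 3  # assumed cap; pick a number that suits your organization


def raci_problems(assignments, max_consulted=MAX_CONSULTED):
    """Return a human-readable list of RACI rule violations."""
    problems = []
    for system, roles in assignments.items():
        counts = Counter(roles.values())
        if counts["A"] != 1:
            problems.append(
                f"{system}: expected exactly 1 Accountable, found {counts['A']}"
            )
        if counts["R"] == 0:
            problems.append(f"{system}: no Responsible party assigned")
        if counts["C"] > max_consulted:
            problems.append(
                f"{system}: {counts['C']} Consulted roles exceeds cap of {max_consulted}"
            )
    return problems


for problem in raci_problems(raci):
    print(problem)
# support-chatbot: expected exactly 1 Accountable, found 0
```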

If you’re looking for expert help in aligning your AI systems with these stakeholder roles, NAITIVE AI Consulting Agency (https://naitive.cloud) offers specialized services to integrate these best practices into your risk management strategy.

Step 2: Gather Stakeholder Feedback

Once you’ve mapped out your stakeholders and AI systems, the next step is to actively collect feedback. This process helps uncover risks that standard technical metrics might miss, such as ethical concerns, inefficiencies, or trust issues. By using a mix of feedback methods, you can capture both structured data and open-ended insights to better understand not just what is happening, but also why it’s happening. This step builds on the stakeholder mapping process to ensure every perspective is included in identifying potential risks.

Using Surveys and Interviews

Structured surveys are a great option when you need to gather input from a large group of stakeholders or want to monitor changes over time. These surveys can include rating scales for measurable data and open-ended questions for deeper insights. For example, a stakeholder might rate an AI tool "4 out of 5" but also raise privacy concerns in their comments - something that wouldn’t stand out by looking at the score alone.

For more complex or nuanced issues, interviews and focus groups are invaluable. One-on-one interviews allow for detailed discussions with key individuals, such as compliance officers or department heads who work closely with AI systems. Focus groups, on the other hand, encourage collective discussions, revealing shared concerns about fairness, bias, or reliability that might not surface in individual conversations.

Don’t underestimate the value of informal channels for feedback. In July 2025, Microsoft’s People Science team surveyed 1,800 employees globally and found that informal conversations and team meetings were the most commonly used feedback channels across industries. While 66% of employees feel they have enough opportunities to share AI-related feedback, this drops to 52% among individual contributors. Additionally, 34% of employees report they still lack avenues to provide input on their AI experiences. To address this, consider tools like pulse surveys - short, frequent check-ins that fit into busy schedules - and in-product feedback tools that capture input at the exact moment an issue arises. Notably, high-frequency AI users are much more likely to receive weekly in-product feedback requests (53%) compared to low-frequency users (23%).

Using AI for Sentiment Analysis

While surveys and interviews gather direct feedback, AI-powered sentiment analysis can quickly process large amounts of unstructured data, such as open-ended survey responses, emails, or meeting notes. What might take weeks to analyze manually can be done in minutes with these tools. They can tag issues, extract sentiment, and identify recurring themes, helping you pinpoint emerging risks faster.

One particularly useful feature is detecting "score-sentiment mismatches." This happens when someone gives a high numerical rating but expresses concerns in their written feedback. For instance, a stakeholder might rate an AI hiring tool "8 out of 10" but mention that it seems to exclude qualified candidates from certain backgrounds, signaling a potential bias issue.
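To make the idea concrete, here is a minimal sketch of score-sentiment mismatch detection. It stands in a tiny keyword list for a real sentiment model, so the `CONCERN_TERMS` lexicon, the 0.7 "high score" ratio, and the response fields are all assumptions for illustration, not a production approach.

```python
import re

# Assumed, deliberately tiny lexicon; a real system would use a sentiment model.
CONCERN_TERMS = {
    "bias", "biased", "exclude", "excludes", "unfair",
    "privacy", "concern", "concerned", "worried",
}


def has_concern(comment: str) -> bool:
    """True if the comment contains any term from the concern lexicon."""
    words = set(re.findall(r"[a-z']+", comment.lower()))
    return bool(words & CONCERN_TERMS)


def score_sentiment_mismatches(responses, scale_max=10, high_ratio=0.7):
    """Return IDs of responses with a high rating but a concerned comment."""
    return [
        r["stakeholder_id"]
        for r in responses
        if r["score"] / scale_max >= high_ratio and has_concern(r["comment"])
    ]


responses = [
    {"stakeholder_id": "S-001", "score": 8,
     "comment": "Fast, but it seems to exclude qualified candidates from certain backgrounds."},
    {"stakeholder_id": "S-002", "score": 9,
     "comment": "Works well and saves time."},
    {"stakeholder_id": "S-003", "score": 3,
     "comment": "I am worried about privacy."},
]

print(score_sentiment_mismatches(responses))  # ['S-001']
```

Only S-001 is flagged: S-002 has no concern terms, and S-003's low score already matches its negative comment, so there is no mismatch to surface.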

Here’s an example: A job training nonprofit used AI-powered tools to analyze feedback from 200 participants. By linking participant IDs across applications, surveys, and check-ins, they reduced their analysis time from six weeks to just four minutes. This allowed them to make real-time adjustments based on trends in participant confidence, rather than waiting until the end of the program to identify problems.

To get the most out of sentiment analysis, always include at least one open-ended "why" question in your surveys. Additionally, using persistent stakeholder IDs can help track changes in sentiment over time, allowing you to identify patterns like declining trust or increasing frustration before they become major issues. Many organizations waste 60–80% of their time cleaning fragmented stakeholder data instead of analyzing it, so adopting a "clean-at-source" system that validates data as it’s collected can save significant effort.
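Persistent stakeholder IDs make it possible to compare the same person's feedback across survey rounds. The sketch below flags stakeholders whose score has dropped between their first and latest response; the record layout, the period labels, and the `min_drop` threshold of 2 points are hypothetical.

```python
from collections import defaultdict


def declining_stakeholders(records, min_drop=2):
    """Flag stakeholders whose latest score fell at least `min_drop` below their first.

    `records` is an iterable of (stakeholder_id, period, score) tuples, where
    period labels sort chronologically (for example "2025-01").
    """
    history = defaultdict(list)
    for stakeholder_id, period, score in records:
        history[stakeholder_id].append((period, score))

    flagged = {}
    for stakeholder_id, points in history.items():
        points.sort()  # chronological order by period label
        drop = points[0][1] - points[-1][1]
        if len(points) >= 2 and drop >= min_drop:
            flagged[stakeholder_id] = drop
    return flagged


records = [
    ("S-001", "2025-01", 8), ("S-001", "2025-02", 7), ("S-001", "2025-03", 5),
    ("S-002", "2025-01", 6), ("S-002", "2025-03", 7),
]

print(declining_stakeholders(records))  # {'S-001': 3}
```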

It’s also worth noting that 91% of machine learning models experience performance drift within a few years of deployment. Continuous feedback loops are crucial for spotting early signs of this drift. AI sentiment analysis can reveal when stakeholders start reporting unexpected behaviors or outcomes, signaling that the model may no longer be functioning as intended.

At NAITIVE AI Consulting Agency, we stress the importance of incorporating diverse stakeholder feedback into your AI risk management process. Doing so keeps your systems adaptable and ensures you stay ahead of potential risks.

Step 3: Assess and Prioritize Identified Risks

This step focuses on turning stakeholder feedback into a structured approach for identifying and prioritizing risks. Without a clear system, teams can end up wasting time on minor issues while missing critical threats. By organizing feedback into a structured risk catalog, you can create a practical plan for managing risks effectively.

Catalog Risks with Stakeholder Input

The first step is to build a central AI risk register - a document that consolidates all identified risks in one place. Think of it as your early warning system that not only keeps risks visible but also demonstrates diligence to regulators. Interestingly, while only 31% of public sector organizations currently maintain AI-specific risk registers, 60% of S&P 500 companies recognize the risks associated with rapid AI adoption.

Each risk in the register should include:

- A unique ID for easy tracking.

- A clear description (e.g., "Model may produce racially biased outputs").

- The associated AI application (e.g., "ChatGPT-4 for marketing blog posts").

- Its origin or source.

Group risks into categories like performance, fairness, privacy, security, regulatory compliance, and ethical concerns. Assign a specific owner to each risk - whether it's your CISO, Data Science lead, or a Business Manager - so someone is always accountable for managing it.
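A register entry with the fields listed above can be sketched as a small data structure. This is an illustrative in-memory version only: the class names, the example entry, and the validation rules (unique ID, non-empty owner) are assumptions, and a real register would normally live in a GRC tool or database.

```python
from dataclasses import dataclass
from enum import Enum


class RiskCategory(Enum):
    PERFORMANCE = "performance"
    FAIRNESS = "fairness"
    PRIVACY = "privacy"
    SECURITY = "security"
    REGULATORY = "regulatory compliance"
    ETHICS = "ethical concerns"


@dataclass(frozen=True)
class RiskEntry:
    risk_id: str          # unique ID for tracking
    description: str
    application: str      # the associated AI application
    source: str           # where the risk was raised
    category: RiskCategory
    owner: str            # the accountable person or role


class RiskRegister:
    """In-memory register used only to illustrate the structure."""

    def __init__(self) -> None:
        self._entries: dict[str, RiskEntry] = {}

    def add(self, entry: RiskEntry) -> None:
        if entry.risk_id in self._entries:
            raise ValueError(f"Duplicate risk ID: {entry.risk_id}")
        if not entry.owner.strip():
            raise ValueError(f"Risk {entry.risk_id} has no owner")
        self._entries[entry.risk_id] = entry

    def by_category(self, category: RiskCategory) -> list[RiskEntry]:
        return [e for e in self._entries.values() if e.category is category]


register = RiskRegister()
register.add(RiskEntry(
    risk_id="R-001",
    description="Model may produce racially biased outputs",
    application="Hiring screening model",
    source="Stakeholder workshop",
    category=RiskCategory.FAIRNESS,
    owner="Data Science lead",
))

print([e.risk_id for e in register.by_category(RiskCategory.FAIRNESS)])  # ['R-001']
```

Rejecting entries without an owner at insertion time enforces the "someone is always accountable" rule rather than leaving it to later review.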

To ensure no risks slip through the cracks, use Natural Language Processing (NLP) to scan emails, reports, and meeting notes for concerns that might otherwise be overlooked. Additionally, workshops with both technical teams and business users can help uncover risks across all areas where AI has an impact.

Once you’ve cataloged the risks, the next step is prioritization.

Use Predictive Analytics for Prioritization

With a complete risk register in place, shift your focus to prioritizing these risks using data-driven techniques. Start by ranking risks based on their likelihood and potential impact using a simple formula:

AI Risk = Likelihood × Potential Effect (Impact)

Calculate both Inherent Risk (the risk before controls are applied) and Residual Risk (the risk after controls are applied). Tools like a 5x5 risk heat map and scenario modeling can help identify high-priority risks quickly. For example:

- Rate each risk on a scale of 1–5 for likelihood (Rare to Almost Certain).

- Rate impact on a scale of 1–5 (Minor to Critical).

A risk scoring 20 (e.g., 4 for likelihood × 5 for impact) would land in the "Critical" zone, signaling the need for immediate action - possibly even shutting down a system.
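The scoring above can be sketched in a few lines. Note the assumptions: the text only states that a score of 20 falls in the "Critical" zone, so the other zone boundaries in `ZONES` are hypothetical, as is the example of a control that lowers likelihood from 4 to 2.

```python
def risk_score(likelihood: int, impact: int) -> int:
    """Multiply likelihood by impact, each rated on a 1-5 scale."""
    for name, value in (("likelihood", likelihood), ("impact", impact)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return likelihood * impact


# Assumed zone floors; only "20 is Critical" comes from the text.
ZONES = [(20, "Critical"), (12, "High"), (6, "Medium"), (1, "Low")]


def risk_zone(score: int) -> str:
    """Map a 1-25 score to a heat-map zone label."""
    for floor, label in ZONES:
        if score >= floor:
            return label
    raise ValueError(f"score must be at least 1, got {score}")


inherent = risk_score(likelihood=4, impact=5)   # before controls
residual = risk_score(likelihood=2, impact=5)   # after controls lower likelihood

print(inherent, risk_zone(inherent))  # 20 Critical
print(residual, risk_zone(residual))  # 10 Medium
```

Scoring the same risk twice, once before and once after controls, shows how much a given mitigation actually moves it on the heat map.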

Machine learning can further enhance prioritization by analyzing historical project data to spot patterns, such as budget overruns or delays. Real-time risk scoring systems can also flag emerging issues like anomalies or unexpected actions during runtime, eliminating the need to rely solely on periodic reviews. This approach ensures risks are continuously monitored and addressed in real time.

While predictive analytics is powerful, it’s crucial to combine it with human judgment for critical decisions. Regularly updating your models with fresh data from continuous monitoring will help address any shifts in data patterns or model performance over time.

At NAITIVE AI Consulting Agency, we specialize in helping organizations implement these prioritization frameworks so resources are focused on the risks that matter most.

Step 4: Develop and Implement Risk Mitigation Strategies

After identifying and prioritizing your risks, the next logical step is to turn stakeholder feedback into actionable plans. This involves creating safeguards that directly address the concerns of those deeply familiar with your AI systems - whether it’s the security team focused on preventing data leaks or the compliance officer monitoring regulatory obligations. The aim here is clear: stop risks from escalating and keep your systems secure and reliable.

Incorporate Stakeholder Insights into Mitigation Plans

Your RACI matrix is a valuable tool for assigning responsibilities to manage each identified risk. For high-stakes projects like AI applications in healthcare or law enforcement, consider forming an Ethics Review Board. This board can evaluate how applications align with your company’s ethical standards before they are deployed. By involving teams from AppSec, engineering, IT, and compliance, you ensure that mitigation strategies balance operational needs with regulatory demands.

Customize your mitigation strategies to leverage the expertise of specific stakeholders. For example:

- If your data science team identifies model drift as a concern, set up controlled retraining pipelines that use monitoring data and team feedback to keep models accurate.

- If your security team flags potential data exposure risks, implement least-privilege access protocols to limit AI’s permissions and data usage.

It’s all about balancing the likelihood of risks with the cost of addressing them to achieve effective mitigation.

The stakes are high. AI-related data breaches cost organizations an average of $4.88 million, while failing to document risk assessments under the EU AI Act could lead to fines as steep as 7% of global turnover. Alarmingly, only 24% of generative AI projects currently have adequate security measures in place. Nizar Hneini, Senior Partner at Roland Berger, underscores this urgency:

"Risk mitigation isn't a cost of innovation - it's the foundation that allows innovation to endure."

Beyond creating plans, ongoing oversight is crucial to ensure these safeguards remain effective during deployment.

Set Up Automated Monitoring Systems

Automated monitoring is a must, especially since 91% of machine learning models experience drift within a few years of deployment. These systems provide real-time oversight, flagging anomalies and either pausing execution or escalating the issue for human review. Tools like LLM firewalls can catch harmful instructions or unsafe outputs as they occur, while statistical divergence measures help identify shifts between live inputs and training data before performance suffers.
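The text does not name a specific divergence measure. As one possible example, the sketch below computes the Population Stability Index (PSI) between a training sample and live inputs for a single numeric feature. The bin count, the `eps` smoothing value, and the 0.25 alert threshold (a commonly cited rule of thumb) are assumptions to tune for your own data.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample (`expected`) and a live sample (`actual`).

    Bins are equal-width over the reference range; live values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant features

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p, q))


training = [i / 100 for i in range(100)]          # reference distribution
live_same = list(training)                        # no drift
live_shifted = [0.5 + i / 200 for i in range(100)]  # mass moved to upper half

PSI_ALERT = 0.25  # assumed threshold, based on a common rule of thumb

for name, sample in (("same", live_same), ("shifted", live_shifted)):
    psi = population_stability_index(training, sample)
    print(name, "drift alert" if psi > PSI_ALERT else "ok")
```

In practice a check like this would run per feature on a schedule, with alerts feeding the escalation path described above.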

Set thresholds for AI prediction confidence, and trigger human-in-the-loop reviews when those thresholds are crossed. For high-risk applications, implement automated guardrails, rollback mechanisms, and Key Risk Indicators (KRIs) to notify teams when it’s time to reassess risks due to changes in technology or regulations. Palavalli, a data security expert from Forcepoint, highlights the importance of this approach:

"We really need a system that continuously analyzes behavior, automatically applies adaptive risk scoring and enforcement and protects data throughout its entire life cycle – from discovery and classification to lineage and governance, all the way through to detection and remediation."

To enhance traceability, ensure your monitoring systems log all inputs, outputs, and features. This creates a reliable record for forensic reviews in case of failures. At NAITIVE AI Consulting Agency, we specialize in integrating these automated monitoring systems into existing Enterprise Governance, Risk, and Compliance (GRC) programs, ensuring AI oversight becomes a seamless part of your organization’s broader risk management framework.
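Confidence thresholds and traceability logging can be combined in one step: route each prediction, then write a record of its inputs, output, and routing decision. This is a minimal sketch; the two threshold values, the record fields, and the model name are hypothetical, and the in-memory `StringIO` stands in for a real log file or pipeline.

```python
import io
import json
from datetime import datetime, timezone

REVIEW_THRESHOLD = 0.80  # assumed: below this, send to a human reviewer
HALT_THRESHOLD = 0.50    # assumed: below this, pause execution entirely


def route(confidence: float) -> str:
    """Decide what happens to a prediction based on model confidence."""
    if confidence < HALT_THRESHOLD:
        return "halt"
    if confidence < REVIEW_THRESHOLD:
        return "human_review"
    return "auto_approve"


def handle_prediction(stream, model_version, features, output, confidence):
    """Route a prediction and append one JSON line to the audit log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "features": features,
        "output": output,
        "confidence": confidence,
        "route": route(confidence),
    }
    stream.write(json.dumps(record, sort_keys=True) + "\n")
    return record


audit_log = io.StringIO()  # stand-in for a file or logging pipeline
record = handle_prediction(
    audit_log,
    model_version="credit-model-v3",
    features={"income": 52000, "tenure_months": 18},
    output="approve",
    confidence=0.71,
)

print(record["route"])  # human_review
```

Writing one self-contained JSON line per decision keeps the log easy to replay during a forensic review.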

Step 5: Monitor Continuously and Improve Over Time

Risk management isn’t a one-and-done effort - it’s an ongoing process. As models evolve, regulations shift, and priorities change, continuous oversight becomes critical. Automated monitoring and risk mitigation are great starting points, but without regular checks and stakeholder input, even the best plans can become outdated. Staying vigilant ensures your risk management strategies remain effective and aligned with your goals.

Real-Time Dashboards and Reporting

Real-time dashboards are like a pulse check for your AI systems. Unlike static reports that arrive after the fact, these dashboards provide live updates on key metrics, such as spikes in incidents or unusual behaviors. They allow leadership to shift their focus from "What went wrong?" to "What’s our next move?"

These dashboards monitor critical risk indicators - like sudden changes in user activity, transaction volumes, policy overrides, or context drift - so you can act before small issues snowball. For autonomous AI agents, runtime monitoring is especially important. This is particularly true when building multi-agent research teams that require coordinated oversight. Madhulika Srikumar from the Partnership on AI explains:

"Real-time failure detection is the use of automated monitoring systems that track agent behavior as it unfolds, flag anomalies, and either halt execution or escalate to human oversight."

Tools like Vertex AI can handle automated performance evaluations, while BigQuery acts as a centralized hub for tracking trends and analyzing risks over time using SQL queries. For organizations relying on autonomous agents, platforms like Noma Security provide a unified view of agents, applications, and models, complete with automated policy enforcement and risk scoring.

However, it’s worth noting that monitoring systems can be costly, sometimes rivaling the expense of deploying the AI agents themselves. To avoid "alert fatigue", fine-tune your alerts to focus on critical issues while filtering out noise.
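One simple way to reduce alert noise is to drop low-severity alerts and suppress repeats of the same alert within a cooldown window. The sketch below illustrates that idea; the alert fields, severity levels, and the 300-second cooldown are assumptions, not settings from any particular monitoring product.

```python
SEVERITY_RANK = {"info": 0, "warning": 1, "critical": 2}


def filter_alerts(alerts, min_severity="warning", cooldown_seconds=300):
    """Keep alerts at or above `min_severity`, suppressing repeats of the
    same (system, kind) pair that arrive within the cooldown window."""
    last_sent = {}
    kept = []
    for alert in sorted(alerts, key=lambda a: a["ts"]):
        if SEVERITY_RANK[alert["severity"]] < SEVERITY_RANK[min_severity]:
            continue
        key = (alert["system"], alert["kind"])
        previous = last_sent.get(key)
        if previous is not None and alert["ts"] - previous < cooldown_seconds:
            continue
        last_sent[key] = alert["ts"]
        kept.append(alert)
    return kept


# Hypothetical alert stream; `ts` is seconds since the start of the window.
alerts = [
    {"ts": 0, "system": "hiring-screener", "kind": "drift", "severity": "warning"},
    {"ts": 60, "system": "hiring-screener", "kind": "drift", "severity": "warning"},
    {"ts": 90, "system": "hiring-screener", "kind": "latency", "severity": "info"},
    {"ts": 100, "system": "support-chatbot", "kind": "policy_override", "severity": "critical"},
    {"ts": 400, "system": "hiring-screener", "kind": "drift", "severity": "warning"},
]

print([a["ts"] for a in filter_alerts(alerts)])  # [0, 100, 400]
```

The repeat at 60 seconds and the info-level alert are filtered out, while the drift alert at 400 seconds is sent again because the cooldown has elapsed.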

Dashboards are invaluable for operational insights, but they’re just one piece of the puzzle. Regular stakeholder reviews are essential for maintaining strategic alignment with emerging risks.

Conduct Regular Stakeholder Reviews

In the fast-paced world of technology, risks can evolve overnight. That’s why periodic reviews with stakeholders are a must. These reviews should bring together cross-functional teams - like AppSec, engineering, IT, compliance, and business units - to ensure your strategies address both operational realities and regulatory demands.

Effective feedback loops go beyond dashboards. They incorporate internal signals (e.g., employee feedback, incident logs, and user activity) and external cues (e.g., regulatory updates and industry shifts) to catch blind spots. This is especially important in a landscape where 85% of business leaders say compliance rules have grown more complex in the last three years, and 61% of compliance professionals rank "keeping up with regulatory change" as their top concern.

Richard Chambers, Senior Advisor for Risk and Audit at Optro, highlights the importance of embedding risk awareness into daily routines:

"Building risk awareness into everyday workflows closes the distance between information and response."

Start by focusing on one or two high-priority risks and gradually expand. Establish clear routines and assign responsibilities so every stakeholder knows their role when an alert arises. Encourage open communication and reward early warnings to create a culture that prioritizes risk awareness.

At NAITIVE AI Consulting Agency, we specialize in helping organizations implement these continuous monitoring frameworks and organize regular stakeholder reviews. This ensures your AI risk management evolves alongside your technology and business objectives.

Reactive vs. Early AI Risk Detection

Reactive detection waits for problems to surface, while early detection focuses on identifying and addressing potential risks before they escalate. This shift in timing and collaboration fits perfectly with a stakeholder-focused approach to managing AI risks.

Reactive methods depend on addressing issues after they've already occurred, which can be particularly risky when dealing with autonomous systems capable of performing chained actions. For example, when autonomous agents execute tasks like API calls or financial transactions, waiting for a failure to happen can lead to inefficiencies and even serious dangers. As Madhulika Srikumar from the Partnership on AI points out:

"Because these systems act directly in the environment, failures to meet user goals can result in financial loss, safety risks, or breakdowns in critical processes."

On the other hand, early detection leverages tools like predictive analytics, real-time dashboards, and runtime guardrails to catch issues before they cause harm. This proactive approach also includes input from cross-functional teams - such as AppSec, engineering, IT, and compliance - ensuring that risk assessments account for a wide range of operational priorities, not just technical performance. The table below highlights the key differences between reactive and proactive detection methods.

Comparison Table

| Feature | Reactive Detection | Early (Proactive) Detection |
|---|---|---|
| Timing | Post-incident or periodic reviews | Real-time monitoring during execution |
| Stakeholder Role | Minimal; involved after a failure occurs | Collaborative; active input on stakes and impact during design |
| Primary Tools | Manual audits, incident logs, retrospective reports | Automated monitoring, predictive analytics, runtime guardrails |
| Focus | Static model performance (e.g., bias, drift) | Dynamic behavior and autonomous action chains |
| Risk Impact | High; failures can cascade before being caught | Lower; issues can be halted or escalated early |
| Implementation | Easier to implement initially | Resource-intensive; requires technical research and cross-functional alignment |

Shifting from reactive to early detection isn't just about upgrading technology - it's about fostering a mindset of prevention. By adopting early detection strategies, organizations can minimize financial risks, strengthen relationships with regulators, and pave the way for responsible AI development.

Conclusion

Addressing AI risks isn't just about tackling technical issues - it requires active involvement from the right stakeholders. By identifying key players, incorporating their feedback, jointly assessing risks, implementing strategies to mitigate them, and maintaining ongoing monitoring, organizations can establish systems that identify problems before they escalate. Current data on risk management highlights a concerning gap: while many organizations acknowledge AI security vulnerabilities, only a small percentage have put sufficient safeguards in place.

By following the steps outlined earlier - stakeholder mapping, feedback collection, risk assessment, mitigation, and continuous monitoring - organizations can turn risk management into a comprehensive, organization-wide effort. Including voices from executives, developers, data scientists, users, policymakers, and ethicists ensures a broader perspective, reducing blind spots that purely technical processes might overlook. As Shariful Haque from the Department of Business Administration at International American University explains:

"The key to successful implementation is the culture of experimentation, compliance with strong governance principles... and ethical transparency as the most effective way to align stakeholders in the long term."

This collaborative approach fosters the trust needed for AI adoption, especially in a landscape where only 18% of organizations have a council or board empowered to make decisions on responsible AI governance.

The numbers highlight the benefits of taking a proactive stance. Organizations that shift from reactive to proactive risk detection not only avoid costly breaches - potentially saving millions per incident - but also gain the confidence to deploy AI systems securely. Statistics show that businesses with advanced AI security measures save an average of $1.9 million per breach compared to those without such controls.

Start small, involve stakeholders early, and gradually expand your detection capabilities. These steps lay the foundation for a thorough risk management system that protects AI systems throughout their lifecycle. The ultimate aim is to create AI systems that serve your organization's goals while maintaining the accountability and transparency demanded by regulators, customers, and employees.

To explore more about integrating stakeholder feedback into your AI risk management strategy and access the full framework discussed in this guide, visit NAITIVE AI Consulting Agency at https://naitive.cloud.

FAQs

How do I find all the AI systems we’re already using (including Shadow AI)?

To get a full picture of the AI systems in your organization, including any Shadow AI (unsanctioned tools), you need to carry out an AI discovery process. This means cataloging everything: AI applications, user accounts, integrations, and activity across your SaaS environment.

By assessing both approved and unapproved AI tools, you can uncover hidden risks, manage them effectively, and ensure stakeholders are involved in the process. Visibility is key to maintaining control and making informed decisions.

Which stakeholders should be involved in AI risk reviews, and who owns decisions?

AI risk reviews should bring together a wide range of voices: strategic governance, assurance and compliance, technical teams, business users, and external parties such as policymakers and impacted communities. Input from all of these groups is crucial, but decision ownership should be explicit. Assign a single Accountable owner per AI system, typically someone in a leadership position such as a product owner or AI risk owner, and record who was Consulted and Informed.

What should we monitor in production to catch model drift and unsafe behavior early?

To stay ahead of model drift and unsafe behavior, monitor three areas: model performance metrics (including prediction confidence and divergence between live inputs and training data), stakeholder feedback (reports of unexpected behavior or declining trust), and operational data (incident logs, anomalies, and policy overrides). Regularly monitoring these factors allows you to spot risks early and make sure your AI system continues to perform as expected.