Vector Databases in Distributed Knowledge Base Architectures

Vector databases power scalable semantic knowledge bases but require careful sharding, indexing, and security to perform at enterprise scale.

Vector databases are transforming how we handle unstructured data like text, images, and audio. Unlike traditional databases, they use high-dimensional embeddings to enable semantic searches - retrieving results based on meaning rather than exact keywords. For example, searching for "sneakers" might also bring up "running shoes." However, scaling these systems to billions of vectors presents challenges, from memory demands to maintaining low latency.

Key takeaways:

- What they are: Vector databases store embeddings (numerical arrays) that represent the meaning of data, making them ideal for AI applications like Retrieval-Augmented Generation (RAG) vs. finetuning.

- Core challenges: Scaling requires intelligent sharding, replication, and separation of compute and storage to handle billions of vectors efficiently.

- Performance strategies: Techniques like Approximate Nearest Neighbor (ANN) algorithms (e.g., HNSW) and hybrid search methods balance speed, accuracy, and cost.

- Enterprise use: They integrate with AI workflows, ensure data security, and meet latency requirements (e.g., 5–30ms for RAG pipelines).

Vector Databases and Their Core Components

What Are Vector Databases?

Vector databases are designed to store, index, and retrieve high-dimensional embeddings that capture the deeper meaning of unstructured data like text, images, and audio. Unlike traditional relational databases, which rely on rigid tables, vector databases handle numerical arrays where each element represents a specific semantic feature. This is especially important given that around 80% of today’s data is unstructured, making it tough to manage with conventional systems.

These databases work by converting raw data into embeddings, which are the foundation for context-aware retrieval in RAG (Retrieval-Augmented Generation) pipelines. Embeddings represent data numerically, clustering semantically similar items in a multi-dimensional space. For example, a 384-dimensional vector stored as a 32-bit float requires about 1.5 KB of storage. Meanwhile, Azure OpenAI models generate embeddings with 1,536 dimensions to capture even more nuanced relationships.

Now, let’s dive into the core components that make these systems function.

Core Components of Vector Databases

To understand how vector databases enable fast, semantic searches, it’s helpful to look at their internal structure. Each database entry includes:

- Vector ID: A unique identifier.

- Numerical vector: Captures semantic features of the data.

- Original content payload: The raw data being represented.

- Metadata: Additional details like author, timestamp, or category.

The cornerstone of these databases is their indexing structure, which organizes the vector space using Approximate Nearest Neighbor (ANN) algorithms. As one research paper puts it:

The indexing structure is often considered the 'core' element of the vector database.

Algorithms like Hierarchical Navigable Small World (HNSW) make quick retrieval possible without resorting to brute-force searches across millions of vectors. Efficient indexing is critical - without it, adding just one vector field to an index of 100 million documents could increase storage needs by up to seven times.

Benefits of Vector Databases in Knowledge Bases

Thanks to their unique components, vector databases bring powerful advantages to distributed knowledge bases. They excel at semantic search, which goes beyond basic keyword matching. Instead of looking for exact matches, these databases retrieve conceptually related results. For instance, a query for "sneakers" could return results for "running shoes", even though the terms differ.

This semantic approach is especially useful in distributed knowledge bases. Vector databases naturally handle multilingual queries because embeddings focus on meaning rather than specific words. They’re also built to manage billions of vectors while maintaining the speed needed for real-time applications.

However, there’s a catch: embeddings created by one model aren’t compatible with those from another. This means careful model selection is essential for distributed systems. As Elastic explains:

Embeddings created by one provider's model cannot be understood by another; for example, an embedding from an OpenAI model is not compatible with one from another provider.

LLM and Vector Databases: Concepts, Architectures, and Examples - Sam Partee

Distributed Architecture Patterns for Vector Databases

When scaling vector databases in distributed knowledge bases, the architectural approach plays a huge role in handling growth and failures. Two primary patterns dominate this space: Shared-Nothing and Shared-Storage. Each comes with its own set of strengths and compromises.

In a Shared-Nothing setup, every node operates independently, managing its own data and computations. Systems like Qdrant, Weaviate, and Elasticsearch are built on this model. While this independence simplifies certain operations, scaling often means physically redistributing data across nodes. This process can be both time-intensive and disruptive. Despite this, additional refinements in fault tolerance and scalability are often layered onto these systems to boost efficiency.

On the other hand, Shared-Storage architectures separate computation from storage. Here, compute nodes act as stateless workers, relying on durable storage solutions like Amazon S3 to manage data. Systems like Pinecone Serverless and Milvus embrace this pattern, enabling independent scaling of compute and storage. If a compute node fails, it’s relatively simple to spin up another one that can access the data from persistent storage. To further optimize performance, some systems adopt distributed designs to address potential bottlenecks. For instance, coordinator/worker models assign query planning and result aggregation to a central coordinator while worker nodes handle local shards. Alternatively, Peer-to-Peer (P2P) designs utilize gossip protocols or distributed hash tables, removing single points of failure.

The choice between these architectures depends on the specific requirements of your system. As researcher Aakash Sharan aptly puts it:

Dense search is a distributed systems problem disguised as a retrieval problem. And ultimately, sharding and routing are your primary defense against spiraling infrastructure costs.

For particularly large datasets, strategies like content-based sharding or serverless architectures can help maintain low latency while keeping infrastructure costs under control.

Scalability and Performance Optimization

Once you've settled on an architectural pattern with the help of AI consulting, the next hurdle is ensuring your vector database can handle massive scale efficiently while meeting strict latency requirements. Scaling strategies play a key role here, directly affecting both performance and cost - especially when your dataset outgrows the capacity of a single machine. Let’s dive into some core strategies to achieve efficient scaling and maintain performance.

Horizontal Scaling and Sharding

When working with large datasets, sharding becomes essential to distribute the memory load effectively. By splitting data into smaller subsets, or shards, and distributing them across multiple nodes, the system can handle larger datasets more efficiently.

The way you implement sharding has a direct impact on system performance. Random (or hash-based) sharding, for instance, uses a hash of vector IDs to distribute data evenly across shards. While this ensures balanced loads, it also requires scatter-gather queries, where latency hinges on the slowest shard. On the other hand, content-based sharding groups semantically similar vectors into the same shard using clustering algorithms like k-means. This approach allows for query pruning, where only relevant shards are searched, reducing CPU and network strain [4, 8].

For multi-tenant SaaS applications, metadata-based sharding offers another layer of optimization. Partitioning data by fields such as tenant_id or region isolates tenant data and limits queries to specific shards, avoiding cluster-wide broadcasts. Additionally, techniques like quantization can shrink memory usage by up to 30×, making it possible to store larger datasets on more affordable hardware.

Replication and Fault Tolerance

Replication is crucial for boosting query throughput and maintaining availability. By storing multiple copies of shards across nodes, the system can handle more concurrent queries as the replicas share the workload. Increasing the replication factor often results in a near-linear improvement in queries per second (QPS). Replication also ensures the system remains operational even if individual nodes fail, adding a layer of reliability to sharding strategies.

Most distributed vector databases rely on the Raft consensus algorithm to manage metadata and cluster topology, ensuring strong consistency for critical system information [4, 24]. For vector data, many systems offer tunable consistency levels - such as ONE, QUORUM, or ALL - allowing you to balance search accuracy with latency and availability. For high-availability, mission-critical setups, a replication factor of at least 3 is generally recommended.

Separation of Compute and Storage

Cloud-native architectures often separate compute from storage, allowing each to scale independently. Data is stored in durable object storage like Amazon S3, while stateless compute nodes retrieve and cache index segments as needed [4, 22]. As Milvus documentation highlights:

Worker nodes are stateless thanks to separation of storage and computation, and can facilitate system scale-out and disaster recovery when deployed on Kubernetes.

This design also brings cost efficiencies. Instead of relying on expensive local SSDs for all data, you can tier your storage: keep "hot" data (like centroids and routing tables) in RAM for instant access, "warm" data (compressed partitions) on SSDs, and "cold" historical embeddings in economical object storage. For real-time applications like RAG pipelines, where end-to-end latency must stay within 100–150 ms, vector search operations are usually allocated just 5–30 ms.

Indexing and Querying Techniques

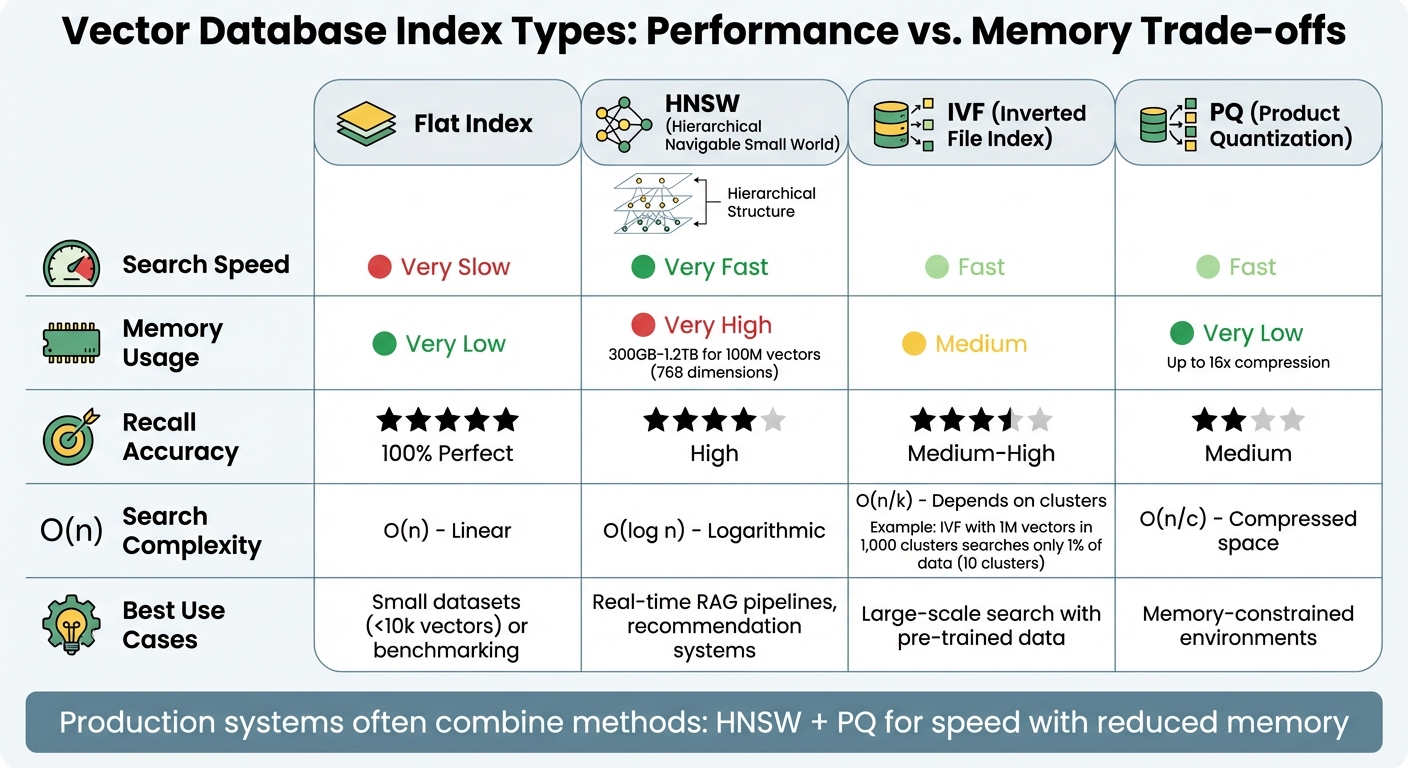

Vector Database Index Types Comparison: Performance, Memory, and Use Cases

In distributed knowledge base systems, managing billions of vectors efficiently hinges on precise indexing and querying methods. Indexing organizes data for quick retrieval, while querying translates user inputs into mathematical operations to locate the closest matches. These processes are the backbone of distributed systems that deal with massive datasets.

Indexing Algorithms

Indexing algorithms balance speed, memory usage, and accuracy differently. One of the most popular methods is Hierarchical Navigable Small World (HNSW), a graph-based approach that organizes vectors in layers. The top layers are sparse for quick navigation, while the dense bottom layer ensures precision. As Michael Brenndoerfer explains:

HNSW combines two powerful ideas: the 'small world' property of certain graphs... and a hierarchical layering scheme inspired by skip lists.

HNSW offers logarithmic search time complexity ($O(\log n)$), making it incredibly fast. However, it comes with high memory demands. For instance, indexing 100 million vectors with 768 dimensions can require anywhere from 300GB to 1.2TB of memory just for graph nodes and edges. To mitigate this, Product Quantization (PQ) is often used alongside HNSW. PQ compresses vectors by breaking them into sub-vectors and representing them with short codes, cutting memory usage by up to 16x.

Another approach, the Inverted File Index (IVF), partitions the vector space into clusters using k-means. During a query, only the most relevant clusters are searched. For example, in a dataset with one million vectors divided into 1,000 clusters, querying just 10 clusters means searching only 1% of the data. However, IVF requires a training phase to define cluster centroids, which can complicate things in dynamic environments where data is constantly updated.

For smaller datasets or isolated data in multi-tenant systems, a Flat Index performs exhaustive searches with perfect recall. While it scales linearly ($O(n)$) and becomes impractical for large datasets, some systems like Weaviate start with a flat index and automatically transition to HNSW as collections grow beyond 10,000 objects.

| Index Type | Search Speed | Memory | Recall | Use Case |

|---|---|---|---|---|

| Flat | Very Slow | Very Low | 100% (Perfect) | Small datasets (<10k) or benchmarking |

| HNSW | Very Fast | Very High | High | Real-time RAG, recommendation systems |

| IVF | Fast | Medium | Medium-High | Large-scale search with pre-trained data |

| PQ | Fast | Very Low | Medium | Memory-constrained environments |

Once indexing is set up, the next step is ensuring user queries are processed effectively for accurate results.

Querying and Similarity Matching

With efficient indexing in place, query processing becomes a streamlined operation. User inputs are converted into high-dimensional embeddings using the same model used for indexing. The database then calculates distances between the query vector and indexed vectors using metrics like Cosine Similarity (measuring the angle between vectors) or Euclidean Distance (measuring straight-line distance). The objective is to identify the "nearest neighbors" - the most similar vectors.

Approximate Nearest Neighbor (ANN) algorithms enable lightning-fast searches, returning results in milliseconds even for datasets with billions of vectors. In HNSW, the ef_search parameter adjusts the balance between speed and recall. Higher values increase the search depth, improving recall but slightly slowing down retrieval. This fine-tuning is crucial in distributed systems where latency is a priority.

Many systems employ a two-stage retrieval process. The first stage uses vector search to quickly retrieve 50–200 candidates. In the second stage, a more computationally expensive cross-encoder or large language model (LLM) reranks the top results for greater accuracy. Paweł Brzuszkiewicz from the PractiqAI Team emphasizes this point:

A vector database is infrastructure for meaning. Treat it with the same rigor you treat your transactional database - schema design, indexing, operations - and it will quietly power your AI systems.

Hybrid search is also gaining traction. This method combines dense vector search for semantic understanding with sparse keyword search (like BM25) for exact matches, such as error codes or product IDs. This dual approach ensures the knowledge base can handle both natural language queries and precise technical requests.

Enterprise Implementation Considerations

Rolling out vector databases on a large scale involves more than just setting up the technology. Organizations need to tackle critical issues like security, system reliability during failures, and smooth integration with AI workflows. These factors determine whether a distributed knowledge base becomes a valuable asset or a potential risk.

Data Security and Access Control

Vector embeddings can unintentionally expose sensitive information. For instance, embedding inversion attacks allow attackers to reconstruct private data - such as medical records or legal documents - straight from the vectors themselves. This makes security a top priority.

Static API keys, while common, come with a major flaw: if compromised, they grant unrestricted access to all data collections. As the Qdrant team points out:

"The primary challenge with static API keys is their all-or-nothing access, inadequate for role-based data segregation in enterprise applications".

Role-Based Access Control (RBAC) addresses this issue by setting specific permissions for different roles. For example, a billing analyst might have read-only access to financial data, while a marketing team member might only access customer sentiment data.

To further enhance security, JWT-based authentication offers stateless RBAC with features like expiration times and instant revocation through claims such as value_exists. Qdrant 1.9 introduced these features to help large enterprises manage internal data more effectively.

Encryption is another key layer of protection. TLS encryption ensures secure data transmission by blocking Man-in-the-Middle attacks, while Searchable Encryption (SE) allows encrypted data to be queried without decryption. This is especially important for RAG pipelines handling sensitive documents. Cisco Security highlights this:

"Searchable encryption allows users to search encrypted data without decrypting it. This capability is especially useful when data privacy is a concern, such as in cloud storage or vector databases".

For organizations with global operations, compliance with regional regulations like GDPR or HIPAA is non-negotiable. Data sovereignty rules often require that data remain within specific geographic boundaries. Hybrid cloud setups can meet these requirements by centralizing database management while keeping data within the required infrastructure.

Consistency and Fault Recovery

In distributed systems, failures are inevitable. The critical question is how quickly and effectively the system can bounce back. Consensus protocols like Raft ensure that all nodes stay on the same page regarding cluster topology and metadata, preventing "split-brain" scenarios where different parts of the system operate independently.

Modern vector databases offer tunable consistency levels, allowing enterprises to balance speed and accuracy. For RAG applications, session consistency is crucial - users need to search for documents immediately after uploading them.

Systems use automated failure detection to monitor nodes and redirect traffic to healthy replicas when issues arise. Self-healing mechanisms take it a step further, re-replicating data from failed nodes and resolving discrepancies using Merkle trees and background "anti-entropy" processes. Separating storage and compute adds another layer of resilience. Durable object stores like S3 act as the "single source of truth", enabling stateless compute nodes to be replaced without risking data loss.

For high-performance RAG pipelines, latency is critical. Vector search often has only 5–30ms to complete its task, as Aakash Sharan emphasizes:

"Latency is not an optimization problem - it's a survival constraint".

With robust fault recovery in place, enterprises can confidently integrate vector databases into their AI workflows.

Integration with LLMs and RAG Pipelines

Vector databases are the backbone of AI-driven knowledge systems, powering retrieval for applications like RAG pipelines. However, pure vector search may miss exact keyword matches. To address this, production systems often combine dense vector retrieval with sparse keyword methods like BM25 using Reciprocal Rank Fusion (RRF), which can improve retrieval accuracy by 15–30%.

For better accuracy and reduced hallucinations, a two-stage retrieval process is often employed. This approach improves accuracy by 20–35% and enhances multi-hop reasoning by 47%. As Likhon explains:

"The majority of RAG systems fail within 90 days of production deployment... because teams underestimate the engineering complexity required to maintain accuracy, control costs, and meet latency SLAs at scale".

Another challenge is balancing precision with context. Parent-child retrieval solves this by indexing smaller chunks (200–500 tokens) for precise matching while providing larger chunks (1,000–2,000 tokens) to LLMs for better context. Techniques like HyDE (Hypothetical Document Embeddings) generate pseudo-answers to use as search queries, improving recall for complex prompts.

To optimize costs and performance, semantic caching stores results for similar queries, achieving cache hit rates of 60–80% in production systems. This reduces median latency from 150ms to under 20ms at companies like Notion and Intercom. For organizations managing massive datasets, quantization with Float8 achieves 4x compression with minimal quality loss - less than 0.3% degradation - making it a practical choice for reducing memory requirements.

However, Likhon cautions against over-relying on retrieval alone:

"Retrieval is not generation. Even with perfect retrieval, the LLM can still hallucinate, ignore retrieved context, or synthesize answers from parametric knowledge instead of citations".

NAITIVE AI Consulting Agency specializes in creating and managing advanced AI systems, including RAG pipelines and vector database integrations, to deliver enterprise-grade semantic search and knowledge generation workflows.

Conclusion

Vector databases have grown from being experimental tools to becoming a cornerstone for enterprise AI systems. They power semantic search across unstructured data, enhance large language models with real-time information, and provide the contextual memory necessary for autonomous agents. This shift marks a move from static data retrieval to dynamic, context-aware knowledge systems. By 2026, it's projected that over 30% of enterprises will integrate vector databases to support their foundation models - an enormous jump from less than 2% in 2023.

However, moving from pilot projects to full-scale production is no small feat. Around 71% of generative AI pilots fail to progress beyond the testing phase. Success requires specialized expertise in areas like distributed sharding, hybrid retrieval methods, and fine-tuned indexing and replication strategies. For instance, vector search accuracy drops by 12% once a knowledge base surpasses 100,000 pages. Additionally, maintaining query latency under 30 milliseconds while scaling to billions of vectors demands precise optimization of fault recovery mechanisms.

The future promises even more advanced systems, blending vector embeddings with knowledge graphs to create hybrid architectures. Microsoft's GraphRAG research has already shown impressive results, achieving 70–80% improvements in answer depth while cutting token costs by as much as 97% for specific tasks. Gartner emphasizes the urgency for action:

High-tech C-level executives overseeing agentic AI products must recognize that traditional databases aren't suitable for agentic AI and must adopt context-aware data platforms within two years.

NAITIVE AI Consulting Agency specializes in designing and managing these cutting-edge AI systems. From RAG pipelines and vector database integrations to autonomous AI agents, we help turn experimental ideas into production-ready solutions that drive measurable results. Visit naitive.cloud to discover how custom AI strategies can transform your enterprise knowledge systems into a competitive edge.

FAQs

When should I choose shared-nothing vs shared-storage for a vector database?

When deciding on a database architecture, the choice often comes down to shared-nothing or shared-storage, each with its own strengths and trade-offs.

- Shared-Nothing: This setup is ideal for horizontal scalability, fault tolerance, and managing large datasets. By distributing data across multiple nodes without shared components, it minimizes single points of failure. However, it introduces added complexity, especially when it comes to query routing and coordination across nodes.

- Shared-Storage: If simplicity and centralized data management are your priorities, this might be the better fit. It works well for smaller deployments where scaling and performance demands are moderate. That said, as the dataset grows or query loads increase, this approach can struggle with scalability and performance limitations.

Each model serves different needs, so the right choice depends on your specific use case and priorities.

How can I shard vectors without impacting search latency at scale?

To handle vectors efficiently on a large scale, consider using vector-aware sharding. This involves grouping similar vectors within the same shard to cut down on cross-shard searches. For uneven data distribution, hybrid approaches like dynamic shard rebalancing can help balance the load effectively. On top of that, intelligent query routing ensures queries are sent only to the shards that matter, reducing unnecessary delays. Together, these strategies help maintain high performance when working with extensive vector datasets.

What security controls protect sensitive data stored as embeddings?

Sensitive data stored as embeddings is safeguarded using several security measures. These include encryption to protect the data during storage and transmission, access controls to restrict who can view or modify the data, audit logging to track access and changes, and redaction to remove sensitive information where necessary. Additionally, strategies are implemented to counter inversion and inference attacks, further ensuring the security and privacy of the data.