Designing AI Systems for Regulated Industries

Practical guidance for building compliant AI in healthcare, finance, and energy—covering transparency, human oversight, secure logging, encryption, and phased deployment.

AI in regulated industries like healthcare, finance, and energy must meet strict compliance standards while ensuring operational reliability. These systems handle sensitive tasks, from processing patient data to making financial decisions, and operate under laws like HIPAA, GDPR, and the EU AI Act. Non-compliance can lead to fines of up to $1.5M annually under HIPAA or €35M under the EU AI Act.

To build compliant AI systems, organizations must prioritize:

- Transparency: AI decisions must be explainable, with traceable audit logs.

- Human Oversight: High-stakes decisions require expert review and intervention mechanisms.

- Security: Encrypt data, enforce strict access controls, and monitor for vulnerabilities.

A phased implementation approach - starting with decision-support tools and gradually increasing automation - helps ensure compliance and trust. For disaster recovery, AI systems must restore operations without violating data privacy or audit requirements. By embedding these principles, businesses can align AI innovation with regulatory demands.

Regulatory Challenges in AI Disaster Recovery

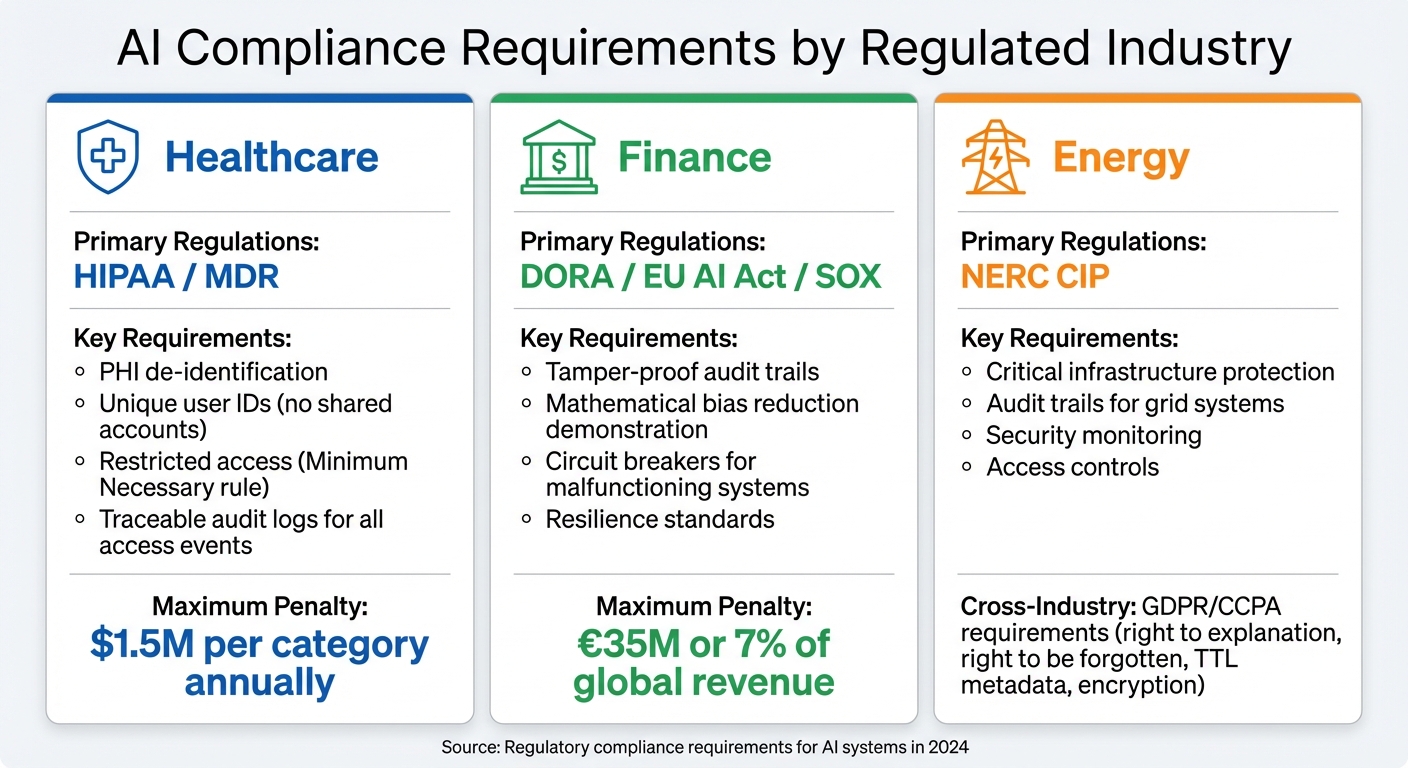

AI Compliance Requirements by Regulated Industry: Healthcare, Finance, and Energy

Compliance Requirements for Regulated Sectors

AI disaster recovery systems operating in regulated industries face strict compliance demands that go well beyond standard IT recovery protocols.

Take healthcare, for example. Regulations like HIPAA require every access event to be tied to a specific individual, which rules out shared accounts altogether. The "Minimum Necessary" rule further restricts data access to what is absolutely needed. Meanwhile, AI-enabled medical devices must align with the safety standards of the EU Medical Device Regulation (MDR). Taken together, these rules mean that all data access during recovery must be fully traceable to individual users.

Finance faces its own set of rigorous standards. DORA, SEC regulations, and SOX require tamper-proof audit trails for algorithmic decisions. High-risk AI applications, such as credit scoring systems, must mathematically demonstrate efforts to reduce bias and incorporate fail-safes like circuit breakers to shut down malfunctioning systems. Regulatory expert Danielle Barbour highlights:

"The compliance obligations that govern employee access to sensitive data apply identically to AI systems accessing that same data. Regulators have not created AI-specific exemptions".

In the energy sector, AI systems must adhere to NERC CIP standards, which focus on protecting critical infrastructure. Across all three sectors, GDPR and CCPA enforce rights like the "right to explanation" and the "right to be forgotten", which in AI workflows translate into time-to-live (TTL) metadata on stored data, encryption, and up-to-date processing records.

| Industry | Primary Regulation | AI Compliance Focus |
|---|---|---|
| Healthcare | HIPAA / MDR | PHI de-identification, unique user IDs, restricted access |
| Finance | DORA / EU AI Act | Resilience, bias reduction, tamper-proof audit logs |
| Energy | NERC CIP | Infrastructure protection, audit trails for grid systems |

These stringent regulations highlight the stakes and set the groundwork for understanding the risks of non-compliance.

Risks of Non-Compliant AI Systems

Failing to meet compliance standards can lead to severe consequences. For instance, HIPAA violations can result in fines of up to $1.5 million per category annually. Similarly, breaches of the EU AI Act carry penalties as high as €35 million or 7% of global revenue.

But the risks go beyond financial penalties. Operational failures are a looming threat, with 70% of AI models experiencing performance declines due to data drift within their first year. Such degradation can lead to recovery failures and improper data restoration.

Another major issue is the use of shared service accounts, which creates gaps in audit trails. This lack of traceability can trigger widespread breach notifications, harming a company’s reputation.

These challenges shed light on why only 53% of AI projects transition successfully from pilot to production. Moreover, 281 out of the Fortune 500 companies flagged AI as a critical risk in their 2024 annual reports. As Jatin Garg, Founder & CTO of GoCodeo, points out:

"Governance and compliance in agentic AI systems is not an afterthought, it is a core software engineering concern".

Design Principles for Compliant AI Systems

In AI disaster recovery, compliance isn't just a box to check - it's the foundation for building systems that are both reliable and aligned with regulations. To meet these requirements, organizations must look beyond performance metrics like accuracy and efficiency and also design for trust, transparency, and accountability. This shift from traditional MLOps to a compliance-first approach means embedding compliance into every layer of AI design. It’s not just about how well a system performs but also how fair, transparent, and controllable it is for human operators.

Building Transparent and Explainable AI Models

Transparency and explainability aren't just nice-to-have features - they're often legal mandates. AI systems, especially those influencing critical sectors like healthcare or finance, must be able to explain their decisions clearly. A well-designed compliance framework typically includes four layers:

- Data Ingestion Layer: Tracks data lineage and flags potential bias.

- Model Core: Incorporates tools for explainability.

- Human Oversight Layer: Features built-in circuit-breakers for intervention.

- Immutable Audit Layer: Ensures decisions are fully traceable.

One effective method is using Chain-of-Thought reasoning, which logs intermediate steps in decision-making. This approach not only helps auditors reconstruct decisions but also reduces errors (like hallucinations) by 30%. As Moritz Hain from Sapien puts it:

"The enterprise that engineers explainable reasoning now secures long term compliance resilience".

However, challenges remain. Some advanced models, such as OpenAI o1, don’t fully expose their internal reasoning, which makes comprehensive audits difficult. When this happens, organizations should document the limitation in Architecture Decision Records (ADRs) so that auditors can see what could and could not be logged.

Once transparency is addressed, the next step is implementing robust logging mechanisms to ensure compliance.

Implementing Automated Decision Logging and Audit Trails

Creating reliable and tamper-proof audit trails is critical for compliance. Organizations should adopt append-only logging systems that capture everything from model lifecycle events to decision inputs, outputs, and human interactions. These logs form the backbone of records that auditors can rely on.
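One way to make an append-only log tamper-evident is to chain entries together with hashes, so that editing an earlier entry invalidates everything after it. The sketch below is a minimal in-memory illustration under that assumption. The class name `HashChainedLog` and the entry fields are hypothetical, and a production system would persist entries to durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class HashChainedLog:
    """Append-only, in-memory log where each entry commits to its predecessor."""

    GENESIS_HASH = "0" * 64  # stands in for "no previous entry"

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry):
        # Hash only the fields that define the entry, in a stable key order.
        payload = {key: entry[key] for key in ("timestamp", "event", "prev_hash")}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, event):
        """Add an event (a JSON-serializable dict) and return the stored entry."""
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS_HASH
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return False if an entry was edited, reordered, or dropped mid-chain."""
        prev_hash = self.GENESIS_HASH
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```

A hash chain on its own does not detect truncation of the most recent entries, so it is usually paired with write-once storage or periodic anchoring of the latest hash in a separate system.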

But basic logging isn’t enough. Contextual telemetry adds layers of detail, such as infrastructure data, model versions, confidence scores, and input references. This transforms logs into powerful forensic tools capable of standing up to regulatory scrutiny. To make this process even more effective, consider tiered logging:

- Tier 1: Captures basic inputs and outputs.

- Tier 2: Adds reasoning steps and confidence scores.

- Tier 3: Provides full forensic trails with cryptographic protections for high-risk scenarios.

To comply with privacy laws like GDPR, sensitive information such as Personally Identifiable Information (PII) should be masked using methods like salted hashing or tokenization. Retention policies are equally important - decision logs should typically be kept for 1–2 years, while logs containing PII should be retained for only 6–12 months, unless a sector-specific rule sets a longer period. Use standardized formats like ISO 8601 timestamps and ensure logs are exportable in both machine-readable (JSON, CSV) and human-readable (PDF) formats to meet diverse auditing needs.
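As a rough illustration of masking and export, the sketch below replaces PII fields with a keyed SHA-256 hash (HMAC, used here as one form of salted hashing), stamps each record with an ISO 8601 UTC timestamp, and exports to JSON and CSV. The field names in `PII_FIELDS` and the helper names are assumptions for the example, and the secret salt would need to come from a managed secret store.

```python
import csv
import hashlib
import hmac
import io
import json
from datetime import datetime, timezone

PII_FIELDS = {"name", "email", "ssn"}  # hypothetical field names


def pseudonymize(value, secret_salt):
    """Keyed hash: the same input maps to the same token, unreadable without the salt."""
    return hmac.new(secret_salt, value.encode("utf-8"), hashlib.sha256).hexdigest()


def build_log_record(decision, secret_salt):
    """Copy a decision dict, masking PII fields and adding an ISO 8601 timestamp."""
    record = {
        key: pseudonymize(str(val), secret_salt) if key in PII_FIELDS else val
        for key, val in decision.items()
    }
    record["logged_at"] = datetime.now(timezone.utc).isoformat()
    return record


def to_json(records):
    return json.dumps(records, indent=2, sort_keys=True)


def to_csv(records):
    buffer = io.StringIO()
    # Use the union of keys so records with different fields still export.
    fieldnames = sorted({key for record in records for key in record})
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()
```

Because the hash is deterministic for a given salt, auditors can still correlate records for the same person without seeing the underlying value.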

Even with automated systems in place, human oversight is essential for handling complex scenarios.

Embedding Human Oversight in AI Processes

No matter how advanced an AI system is, human oversight is critical for decisions that carry high stakes. A tiered oversight model works well:

- Low Complexity: Autonomous operation with occasional human sampling.

- Medium Complexity: Automated assessments with 5–10% human sampling.

- High Complexity: Mandatory expert review for sensitive cases, such as those involving vulnerable individuals, significant financial transactions, or novel situations.
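The three tiers above could be expressed as review sampling rates. This is a minimal sketch: the 1% rate for low complexity is an assumption standing in for "occasional" sampling, the 10% rate is the upper end of the 5–10% range, and the names `Complexity` and `needs_human_review` are illustrative.

```python
import random
from enum import Enum


class Complexity(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Fraction of cases routed to a human reviewer (assumed values, tune per policy).
SAMPLE_RATES = {
    Complexity.LOW: 0.01,     # occasional spot checks
    Complexity.MEDIUM: 0.10,  # upper end of the 5-10% range
    Complexity.HIGH: 1.0,     # every case gets expert review
}


def needs_human_review(complexity, rng):
    """Decide whether this case is sampled for review; rng is a random.Random."""
    # rng.random() is in [0.0, 1.0), so a rate of 1.0 always selects the case.
    return rng.random() < SAMPLE_RATES[complexity]
```

Passing in a seeded `random.Random` keeps sampling reproducible in tests, while production code might log the draw alongside the decision so auditors can confirm the sampling policy was followed.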

Systems should include multiple intervention points. For example, operators might need to take over an entire session, reverse specific outputs, or disable certain model functions. Circuit-breakers can automatically halt processes if the AI deviates from safety guidelines.
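A circuit-breaker of the kind described can be sketched as a counter that halts processing after several consecutive safety-check failures and stays halted until a named operator resets it. The threshold of three and the class shape are assumptions for illustration.

```python
class CircuitBreaker:
    """Halts an AI process after repeated safety-check failures."""

    def __init__(self, max_violations=3):
        self.max_violations = max_violations
        self.violations = 0      # consecutive failures seen so far
        self.open = False        # True means the process is halted
        self.reset_by = None     # last operator who re-enabled the process

    def record(self, passed_safety_check):
        """Feed in the result of each safety check."""
        if passed_safety_check:
            self.violations = 0  # a pass clears the streak but never un-halts
            return
        self.violations += 1
        if self.violations >= self.max_violations:
            self.open = True

    def allow(self):
        """Return True while the AI is permitted to keep acting."""
        return not self.open

    def reset(self, operator_id):
        """Only an explicit human action re-enables the process."""
        self.open = False
        self.violations = 0
        self.reset_by = operator_id
```

The design choice here is that the breaker never closes itself: once tripped, a later passing check does not restore autonomy, which keeps a human in the loop for recovery.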

The Air Canada case highlights what happens without proper oversight. The tribunal found the company at fault due to the lack of escalation mechanisms or overrides to catch errors. As Christopher Rivers, a Tribunal Member, stated:

"Air Canada does not explain why it should not be held responsible for information provided by its agent".

To avoid such pitfalls, implement confidence-threshold escalation. For instance, AI systems should only proceed with decisions when a confidence score of 90% or higher is reached. Anything below that threshold should be flagged for human review. Maintain a version-controlled list of topics - like legal issues, medical advice, or pricing exceptions - that always require human intervention. When escalating, provide human reviewers with detailed context packages, including conversation history, confidence scores, and tool usage. This ensures decisions are made quickly and with all the necessary information.
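The escalation rule described above might look like the following sketch. The 90% threshold comes from the text; the topic identifiers, the `Decision` dataclass, and the shape of the context package are assumptions for illustration.

```python
from dataclasses import dataclass, field

CONFIDENCE_THRESHOLD = 0.90  # proceed only at 90% or higher

# In practice this list would live in version control (hypothetical topic names).
ALWAYS_ESCALATE = frozenset({"legal", "medical_advice", "pricing_exception"})


@dataclass
class Decision:
    topic: str
    confidence: float
    conversation_history: list = field(default_factory=list)
    tools_used: list = field(default_factory=list)


def route(decision):
    """Return 'proceed', or 'escalate' with the context a reviewer needs."""
    reasons = []
    if decision.topic in ALWAYS_ESCALATE:
        reasons.append("restricted_topic")
    if decision.confidence < CONFIDENCE_THRESHOLD:
        reasons.append("low_confidence")
    if not reasons:
        return {"action": "proceed"}
    return {
        "action": "escalate",
        "reasons": reasons,
        "context": {
            "topic": decision.topic,
            "confidence": decision.confidence,
            "conversation_history": decision.conversation_history,
            "tools_used": decision.tools_used,
        },
    }
```

Restricted topics are checked independently of confidence, so a highly confident answer about a legal issue is still routed to a person.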

Phased Implementation of AI in Disaster Recovery

Introducing AI into regulated industries requires a careful, step-by-step approach. Organizations that rush this process are projected to face three times as many compliance issues by 2027. Before starting, it's crucial to classify use cases based on regulatory exposure, data sensitivity, and operational risks. From the outset, organizations should establish centralized AI controls, role-based access management, and immutable audit logs. As Tue Nguyen, Chief AI Officer at Savvycom, explains:

"In regulated industries, AI readiness is less about model accuracy and more about governance architecture".

This phased strategy strengthens disaster recovery compliance by gradually building trust and oversight.

Advisory Phase: AI as a Decision-Support Tool

The first step is to use AI as a support tool, enhancing recovery processes while ensuring compliance. In this phase, AI focuses on analyzing data, identifying patterns, and providing recommendations, leaving humans in full control of decisions.

One example comes from a Thailand-based bank that implemented an AI-driven wealth management platform. The system tracked market trends and suggested portfolio adjustments, but financial advisors reviewed and approved all recommendations before reaching out to clients. This approach led to a 25% increase in client engagement and a 20% boost in revenue. Similarly, a Korean logistics company introduced an AI-powered contract review system that flagged critical clauses and risks. Legal staff reviewed the flagged sections, achieving 95% accuracy and cutting review times by 50% for over 1,000 contracts each month.

Starting with low-risk applications, like document processing, allows organizations to gain confidence and gather performance data before tackling more complex areas, such as credit underwriting or clinical decision-making.

Semi-Automated Phase: Limited Autonomy with Human Approval

Once the advisory phase proves reliable, organizations can expand AI's role to automate low-risk, repetitive tasks while retaining human oversight for high-stakes decisions. This hybrid model uses "approval gates" where human sign-off is required before AI takes significant actions.
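An approval gate can be sketched as a holding area: the AI submits a proposed action, and nothing runs until a human reviewer approves it. The `ApprovalGate` class and its method names below are hypothetical, intended only to show the control flow.

```python
class ApprovalGate:
    """Holds AI-proposed actions until a human approves or rejects them."""

    def __init__(self):
        self._pending = {}   # action_id -> (description, callable)
        self._next_id = 1
        self.history = []    # who decided what, for the audit trail

    def submit(self, description, execute):
        """Queue an action; `execute` is a zero-argument callable run on approval."""
        action_id = self._next_id
        self._next_id += 1
        self._pending[action_id] = (description, execute)
        return action_id

    def pending(self):
        """Return {action_id: description} for everything awaiting sign-off."""
        return {aid: desc for aid, (desc, _) in self._pending.items()}

    def approve(self, action_id, reviewer_id):
        """Record the sign-off, then run the action and return its result."""
        description, execute = self._pending.pop(action_id)
        self.history.append((action_id, description, "approved", reviewer_id))
        return execute()

    def reject(self, action_id, reviewer_id):
        """Record the rejection and discard the action without running it."""
        description, _ = self._pending.pop(action_id)
        self.history.append((action_id, description, "rejected", reviewer_id))
```

Recording the reviewer alongside each outcome ties every significant action to an individual, which supports the traceability requirements discussed earlier.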

Clear boundaries for AI's role and conditions for human approval are essential. As Sucheta Rathi from Techment notes:

"The core question is not whether AI agents can operate in regulated industries... The real question is how to design [them] so that governance, auditability, compliance, and accountability are embedded by default".

Confidence thresholds can also guide decision-making. For instance, if AI certainty falls below 90%, the system should automatically escalate decisions to human reviewers.

A U.S. legal services firm applied this approach with an AI tool for insurance claim reviews. The AI processed data from 500+ files monthly using RAG architecture, while lawyers used AI-generated summaries as input for final decisions. This saved 20 hours per week per team member, reducing document review time by 60%.

To manage human attention effectively, create tiers of alerts:

- Critical alerts demand immediate action.

- Elevated alerts require attention during business hours.

- Informational alerts can be reviewed periodically.
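The three alert tiers could be routed with a small dispatcher like the one below. The business-hours window (Monday to Friday, 09:00 to 17:00) and the returned action names are assumptions for the sketch.

```python
from datetime import datetime
from enum import Enum


class AlertTier(Enum):
    CRITICAL = "critical"
    ELEVATED = "elevated"
    INFORMATIONAL = "informational"


def is_business_hours(moment):
    # Assumption: Monday-Friday, 09:00-17:00 in the timestamp's own timezone.
    return moment.weekday() < 5 and 9 <= moment.hour < 17


def dispatch(tier, moment):
    """Map an alert tier and time to a handling action (hypothetical labels)."""
    if tier is AlertTier.CRITICAL:
        return "page_on_call_now"
    if tier is AlertTier.ELEVATED:
        if is_business_hours(moment):
            return "notify_team"
        return "hold_until_business_hours"
    return "add_to_periodic_digest"
```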

Additionally, human supervisors must have the ability to override AI decisions or halt processes if anomalies arise.

Fully Automated Phase: Building Trust Through Data-Driven Validation

Reaching full automation requires rigorous testing and ongoing monitoring. By 2028, agentic AI is expected to manage 15% of enterprise decisions, up from almost none in 2024. However, full autonomy doesn't mean sacrificing transparency - systems must demonstrate reliability through continuous telemetry, drift detection, and adversarial testing.

To ensure traceability, record all inputs, outputs, confidence scores, and performance metrics with timestamps and model versions. Automated dashboards can monitor for issues like model drift or hallucinations. For instance, a 2024 study of AI-generated references for systematic reviews found that GPT-3.5 hallucinated 39.6% of references, compared to 28.6% for GPT-4. Automated circuit breakers should be in place to halt operations if the system exceeds safety thresholds.
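The text does not prescribe a drift metric. As one common option, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a recent sample of a numeric feature or score. The bin count, the 0.25 alert threshold (a widely used rule of thumb), and the function names are assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between two non-empty lists of numbers, binned on the baseline's range."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # avoid zero width when all values match

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps empty bins from producing log(0) or division by zero.
        return [max(count / len(values), 1e-6) for count in counts]

    baseline = proportions(expected)
    recent = proportions(actual)
    return sum((r - b) * math.log(r / b) for b, r in zip(baseline, recent))


def drift_detected(expected, actual, threshold=0.25):
    """Flag drift when PSI exceeds the (assumed) alert threshold."""
    return population_stability_index(expected, actual) > threshold
```

A monitoring job might run this check on a schedule and feed the result into the same circuit-breaker logic that halts the system when safety thresholds are exceeded.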

Before implementing full automation, organizations should conduct mock audits, simulating regulatory reviews to test documentation and oversight procedures. Synthetic stress tests can also help identify edge cases and potential failures, providing evidence for compliance reviews. By continuously validating AI performance, organizations ensure disaster recovery processes remain aligned with strict regulations. Companies that scale AI effectively are 1.5 times more likely to outperform competitors financially.

Bob Janacek from DataMotion highlights the balance required:

"Autonomy doesn't mean opacity. It means creating systems that can act independently, but also know when, how, and with whom to involve human judgment".

Full automation doesn't eliminate human roles - it shifts them to strategic oversight, enabling humans to guide and supervise rather than act as bottlenecks.

Security and Data Protection in AI Systems

The stakes for AI security are enormous. On average, AI-related security failures cost a staggering $4.8 million per incident and take 290 days to resolve - 83 days longer than traditional breaches. In industries with strict regulations, the risks multiply. HIPAA violations continue to result in hefty fines, and regulatory scrutiny has intensified. Between 2023 and 2025, compliance violations related to AI surged by 187%, with the financial sector facing average fines of $35.2 million. Michael Rodriguez, Compliance Director at ZeroShare, highlights the shifting landscape:

"2026 marks a turning point in AI regulation. The EU AI Act is in full enforcement [and] HIPAA's Security Rule modernization introduces prescriptive AI requirements".

To address these challenges, organizations must deploy layered security measures that safeguard data during storage, processing, and transmission. This includes securing vulnerabilities across multiple levels: the AI model itself (like preventing prompt injection or hallucinations), the application layer, and the infrastructure, such as VPC configurations and encryption protocols. Strong encryption and access controls are essential across all components of AI systems.

Encrypting Data in Transit and at Rest

Encryption is a cornerstone of AI data protection. Sensitive information stored in databases, file systems, or AI training datasets must be encrypted using AES-256. For data in transit - like communication between AI agents, microservices, and external APIs - TLS 1.2 is the baseline standard, though TLS 1.3 is preferred, to guard against man-in-the-middle attacks. To further enhance security, organizations should use VPC endpoints (AWS PrivateLink) to keep AI inference calls (e.g., those to Amazon Bedrock or Amazon SageMaker) on private networks instead of the public internet.
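On the client side, a TLS version floor can be enforced in code rather than left to library defaults. The sketch below uses Python's standard `ssl` module; the helper name `make_client_context` is an assumption, and whether to require TLS 1.3 depends on what the services being called support.

```python
import ssl


def make_client_context(require_tls13=False):
    """Build a client-side TLS context with a minimum protocol version."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    if require_tls13:
        context.minimum_version = ssl.TLSVersion.TLSv1_3
    else:
        context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

The returned context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=make_client_context())`, so that connections negotiating an older protocol version fail instead of silently downgrading.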

Encryption key management is another critical area. Opt for customer-managed keys in a service like AWS KMS to retain full control over data access and enforce key rotation schedules. In healthcare, a de-identification layer should be added to scrub sensitive identifiers from prompts, using techniques like regular expressions or named-entity recognition (NER), before data is sent to third-party LLM providers or logging systems. Every service interacting with protected health information (PHI) must be covered by a Business Associate Agreement (BAA). Keep an updated BAA inventory and review it annually to ensure that third-party services, including vector databases and monitoring tools, remain compliant.
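A regex-based scrubbing pass might look like the sketch below. The three patterns (email, US Social Security number, US-style phone number) are illustrative assumptions and far from a complete identifier list; free-text identifiers such as names generally need an NER model in addition.

```python
import re

# Illustrative patterns only; real deployments need a much broader set.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scrub(text):
    """Replace each matched identifier with a bracketed placeholder label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = "Patient jane.doe@example.com, SSN 123-45-6789, call 555-123-4567"
print(scrub(prompt))  # prints: Patient [EMAIL], SSN [SSN], call [PHONE]
```

Running the scrubber before both the LLM call and the logging call keeps raw identifiers out of third-party systems and out of the audit trail.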

But encryption is only part of the equation - controlling who can access this data is just as critical.

Implementing Strict Identity and Access Management (IAM)

Strong identity controls are essential for securing sensitive AI operations, especially in regulated industries. IAM protocols must align with specific frameworks: the EU AI Act emphasizes traceability and human oversight for high-risk systems, NIST AI RMF focuses on identity-binding and policy enforcement, and HIPAA mandates role-based access control throughout the AI pipeline.

Adopting Zero Trust principles for AI pipelines means enforcing continuous authentication and resource-level authorization for every action taken by an AI agent. As Bitline Security puts it:

"Every identity - human or machine - should have access only to the data it needs, and only for as long as necessary".

Before granting an AI agent access to sensitive systems, require strong authentication, such as multi-factor authentication (MFA) or cryptographic keys. This is particularly important because 23% of IT professionals report that AI agents have exposed access credentials, and 80% have observed agents taking unauthorized actions. Additionally, all IAM-related events - such as authentication attempts, privilege escalations, and access denials - must be logged in tamper-proof storage for at least six years to meet HIPAA standards. These logs ensure that every action is traceable, a critical requirement for audits.
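Resource-level, time-bound authorization with logging of every attempt can be sketched as follows. The `Grant` structure, the identifiers, and the in-memory audit list are assumptions; a real deployment would delegate to an IAM service and write to tamper-proof storage.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    identity: str        # human or machine identity
    resource: str        # the single resource this grant covers
    actions: frozenset   # allowed actions, e.g. frozenset({"read"})
    expires_at: datetime


def authorize(grants, identity, resource, action, now, audit_log):
    """Allow only if an unexpired grant matches exactly; log every attempt."""
    allowed = any(
        grant.identity == identity
        and grant.resource == resource
        and action in grant.actions
        and now < grant.expires_at
        for grant in grants
    )
    # Denials are logged as well as approvals, since both are audit evidence.
    audit_log.append({
        "at": now.isoformat(),
        "identity": identity,
        "resource": resource,
        "action": action,
        "allowed": allowed,
    })
    return allowed
```

Short-lived grants mean an agent's access lapses by default, so a forgotten permission expires instead of lingering.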

Conducting Regular Security Assessments

AI systems are not static. A model deemed secure at deployment can become risky over time due to changes in data or user behavior. Static audits won’t cut it - continuous monitoring is vital. Automated "watcher" agents can detect issues early and provide traceable evidence for auditors. As Agentix Labs explains:

"A model that was compliant at deployment can drift into risky outcomes. Continuous monitoring catches this drift early and produces audit trails that regulators expect".

Organizations should conduct quarterly vulnerability assessments focused on AI components and perform annual penetration tests that include AI-specific attack vectors like prompt injection. Formal risk analyses should be carried out before deployment and revisited annually to evaluate model behavior, data flow risks (such as logging and caching), and incident response readiness.

Adversarial testing is another key step. Test AI systems against threats like prompt injection, data poisoning, and model extraction attempts. BeyondScale Team warns:

"Prompt injection is not just a security concern - it is a HIPAA concern. If an attacker can manipulate your AI agent into revealing PHI from its context window, that is a data breach".

Store all AI decision logs and access events in write-once-read-many (WORM) storage to prevent tampering. Internal reviews should be conducted quarterly, and mock regulatory audits should be simulated to identify gaps in documentation and readiness. For healthcare organizations, isolate components handling sensitive data and audit de-identification services that process PHI before it leaves secure environments. With 73% of organizations experiencing at least one AI-related security incident in the past year, proactive assessments are indispensable for safeguarding information and maintaining compliance.

For tailored guidance on securing AI systems in regulated industries, NAITIVE AI Consulting Agency (https://naitive.cloud) offers expert consulting services to help organizations design and manage secure, compliant AI disaster recovery systems.

Conclusion

Creating AI systems for regulated industries demands more than just technical know-how - it requires prioritizing compliance from the very beginning. Companies that integrate transparency, human oversight, and security into their AI frameworks experience 40% fewer AI-related security incidents and reduced remediation costs. As Optimum succinctly states:

"AI compliance isn't a checkbox - it's a foundation".

Adopting a phased approach - starting with decision-support systems before moving to full automation - helps validate security controls and boosts audit readiness by as much as 50%. This measured strategy consistently delivers better results than rushing to innovate.

Strong security measures are non-negotiable to prevent breaches and hefty penalties. With the average fine for data protection violations reaching $4.4 million, implementing encryption, strict identity management, and continuous monitoring is essential.

These practices not only mitigate risks but also prepare organizations to navigate an evolving regulatory environment. For instance, as frameworks like the EU AI Act continue to shape compliance standards, adaptability becomes key. By early 2026, more than 50% of compliance officers were actively using or testing AI, a significant increase from just 30% in 2023. This shift underscores the growing role of AI in regulated sectors.

For businesses focused on building resilient AI systems and ensuring compliance, expert guidance can make all the difference. NAITIVE AI Consulting Agency (https://naitive.cloud) provides tailored solutions for regulated industries, helping organizations integrate AI technologies while maintaining the oversight and security that regulators require.

FAQs

How do we identify high-risk AI disaster recovery use cases?

High-risk use cases are determined by examining their potential effects on safety, compliance, and operations. Key considerations include the seriousness of possible outcomes, the level of sensitivity of the data being processed, and the relevant regulatory standards. This evaluation helps highlight systems that demand immediate focus - like those managing critical decisions or handling regulated data.

What logs are needed to prove AI decisions were compliant during recovery?

To ensure compliance during recovery, it's crucial to keep detailed audit trails. These records should capture essential elements like key events, data inputs and outputs, model decisions, and system interactions. Thorough logging provides traceability and accountability, making it easier to address regulatory audits and meet compliance standards efficiently.

How can we use AI in disaster recovery without exposing sensitive data?

Using AI for disaster recovery comes with a critical responsibility: protecting sensitive data. To do this effectively, you need to implement strong security practices.

One key approach is data de-identification. Techniques like anonymization or pseudonymization help protect personally identifiable information (PII) and protected health information (PHI). These methods make it harder to trace data back to individuals, reducing privacy risks.

Additionally, enforce strict access controls to limit who can view or manipulate sensitive data. Combine this with encryption - both during transmission and while stored - to keep information secure from unauthorized access. Audit logging is another essential tool, as it helps track access and usage, ensuring transparency and accountability.

By adopting these strategies, you can protect sensitive data, stay compliant with regulations, and maintain trust - all while harnessing AI’s potential for disaster recovery.